Autonomous workflows, powered by real-time feedback and continuous learning, are becoming indispensable for productivity and decision-making.

In the early days of the AI shift, AI applications were mostly built as thin layers on top of off-the-shelf foundation models. But as developers began tackling more complex use cases, they quickly encountered the limitations of simply using RAG on top of off-the-shelf models. While this approach offered a fast path to production, it often fell short in delivering the accuracy, reliability, efficiency, and engagement needed for more sophisticated use cases.

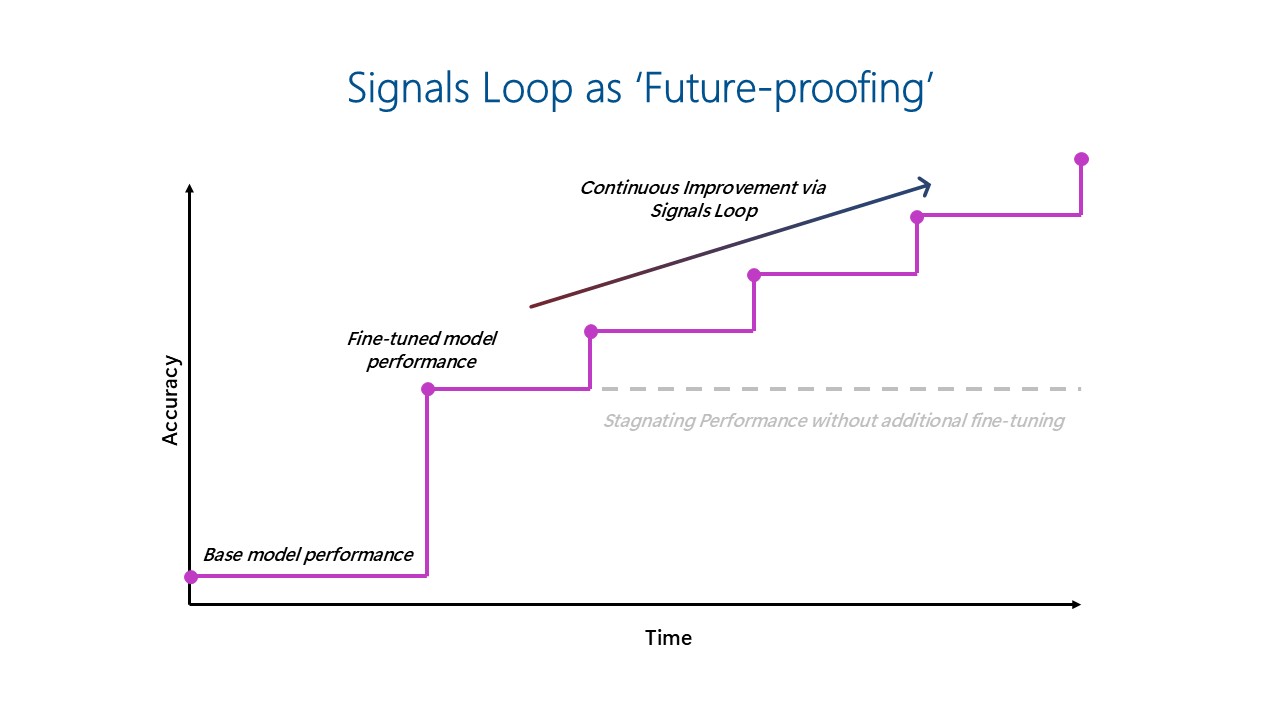

However, this dynamic is shifting. As AI shifts from assistive copilots to autonomous co-workers, the architecture behind these systems must evolve. Autonomous workflows, powered by real-time feedback and continuous learning, are becoming essential for productivity and decision-making. AI applications that incorporate continuous learning through real-time feedback loops (what we refer to as the ‘signals loop’) are emerging as the key to building more adaptive and resilient differentiation over time.

Building truly effective AI apps and agents requires more than just access to powerful LLMs. It demands a rethinking of AI architecture, one that places continuous learning and adaptation at its core. The ‘signals loop’ centers on capturing user interactions and product usage data in real time, then systematically integrating this feedback to refine model behavior and evolve product features, creating applications that get better over time.
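As a rough illustration of the capture-and-integrate step described above, the following Python sketch turns logged interactions into candidate fine-tuning examples. The `Interaction` fields, the `build_training_examples` helper, and the sample log are all hypothetical and chosen only for illustration; they do not describe how any Microsoft product implements its loop.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Interaction:
    """One captured user interaction (all fields are hypothetical)."""
    prompt: str
    model_output: str
    accepted: bool                    # did the user keep the output?
    user_edit: Optional[str] = None   # the user's corrected text, if any


def build_training_examples(interactions):
    """Turn raw interaction signals into (prompt, target) pairs.

    A user correction, when present, is preferred as the target.
    Otherwise accepted outputs are kept, and rejected outputs with
    no correction are dropped.
    """
    examples = []
    for event in interactions:
        if event.user_edit is not None:
            examples.append({"prompt": event.prompt, "target": event.user_edit})
        elif event.accepted:
            examples.append({"prompt": event.prompt, "target": event.model_output})
    return examples


# Made-up sample log: one accepted output, one corrected, one rejected.
log = [
    Interaction("Summarize the visit", "Patient reports a cough.", accepted=True),
    Interaction("Summarize the visit", "No findings.", accepted=False,
                user_edit="Mild fever noted."),
    Interaction("Summarize the visit", "Unclear.", accepted=False),
]
examples = build_training_examples(log)
```

In a real system the resulting examples would typically pass through review, deduplication, and privacy filtering before being used for fine-tuning; those steps are omitted here.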

As the rise of open-source frontier models democratizes access to model weights, fine-tuning (including reinforcement learning) is becoming more accessible and building these loops becomes more feasible. Capabilities like memory are also increasing the value of signals loops. These technologies enable AI systems to retain context and learn from user feedback, driving greater personalization and improving customer retention. And as the use of agents continues to grow, ensuring accuracy becomes even more critical, underscoring the growing importance of fine-tuning and implementing a robust signals loop.

At Microsoft, we’ve seen the power of the signals loop approach firsthand. First-party products like Dragon Copilot and GitHub Copilot exemplify how signals loops can drive rapid product improvement, increased relevance, and long-term user engagement.

Implementing signals loop for continuous AI improvement: Insights from Dragon Copilot and GitHub Copilot

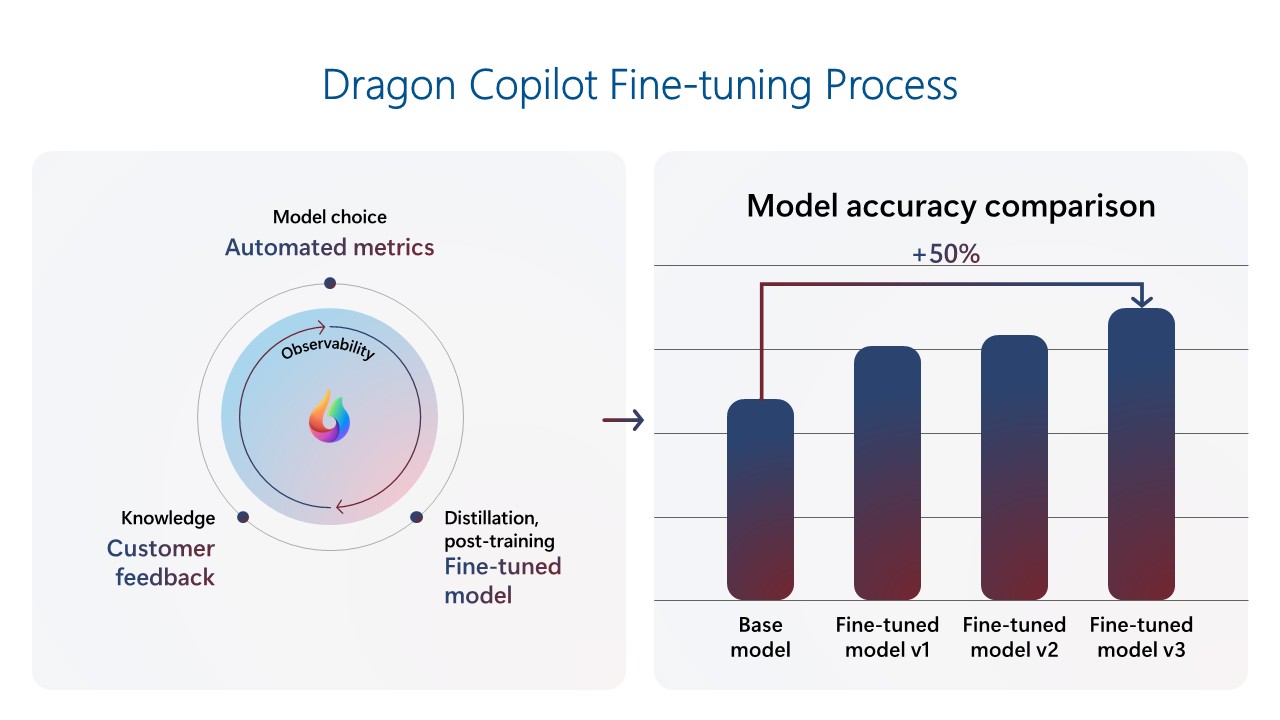

Dragon Copilot is a healthcare Copilot that helps doctors become more productive and deliver better patient care. The Dragon Copilot team has built a signals loop to drive continuous product improvement. The team built a fine-tuned model using a repository of clinical data, which resulted in much better performance than the base foundational model with prompting only. As the product has gained usage, the team has used customer feedback telemetry to continuously refine the model. When new foundational models are released, they are evaluated with automated metrics to benchmark performance, and the model is updated if there are significant gains. This loop creates compounding improvements with each model generation, which is especially important in a field where the need for precision is extremely high. The latest models now outperform base foundational models by ~50%. This high performance helps clinicians focus on patients, capture the full patient story, and improve care quality by producing accurate, comprehensive documentation efficiently and consistently.
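The evaluate-then-update step in that loop can be pictured as a simple promotion gate. The sketch below is a minimal illustration, assuming models are plain callables, a toy exact-match metric, and a hypothetical `min_relative_gain` threshold; the actual metrics and thresholds used by the Dragon Copilot team are not described in this article.

```python
def mean_score(model, eval_set, metric):
    """Average a metric over (input, reference) pairs for one model."""
    scores = [metric(model(x), ref) for x, ref in eval_set]
    return sum(scores) / len(scores)


def should_promote(current, candidate, eval_set, metric, min_relative_gain=0.05):
    """Return True if the candidate beats the current model by the threshold."""
    current_score = mean_score(current, eval_set, metric)
    candidate_score = mean_score(candidate, eval_set, metric)
    if current_score == 0:
        # Relative gain is undefined; promote only on any positive score.
        return candidate_score > 0
    relative_gain = (candidate_score - current_score) / current_score
    return relative_gain >= min_relative_gain


def exact_match(prediction, reference):
    """Toy metric: 1.0 for an exact string match, else 0.0."""
    return 1.0 if prediction == reference else 0.0


# Stand-in "models": plain functions that uppercase some inputs.
def current_model(x):
    return x.upper() if x in ("a", "b") else x        # 2 of 4 correct


def candidate_model(x):
    return x.upper() if x in ("a", "b", "c") else x   # 3 of 4 correct


eval_set = [("a", "A"), ("b", "B"), ("c", "C"), ("d", "D")]
promote = should_promote(current_model, candidate_model, eval_set, exact_match)
```

Here the candidate scores 0.75 against 0.5 for the current model, a 50% relative gain, so the gate returns `True`. Keeping the evaluation set and metric fixed across model generations is what makes successive comparisons meaningful.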

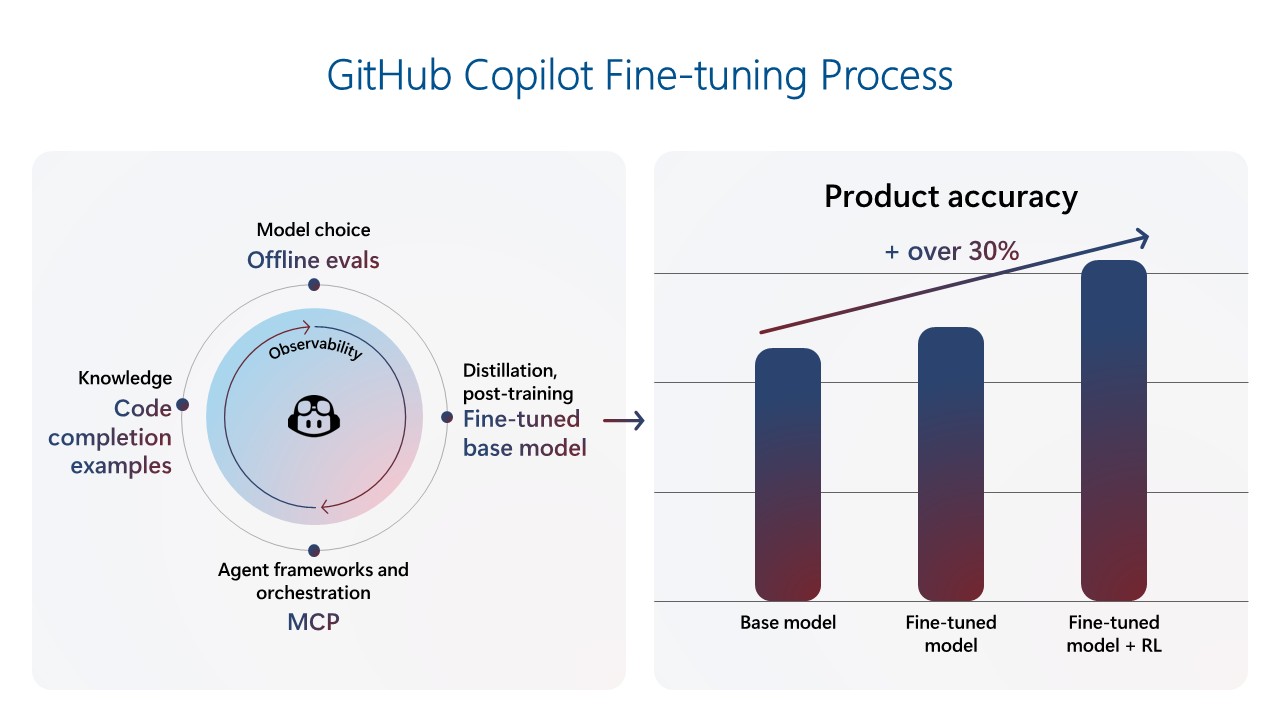

GitHub Copilot was the first Microsoft Copilot, capturing widespread attention and setting the standard for what AI-powered assistance could look like. In its first year, it rapidly grew to over a million users, and has now reached more than 20 million users. As expectations for code suggestion quality and relevance continue to rise, the GitHub Copilot team has shifted its focus to building a robust mid-training and post-training environment, enabling a signals loop to deliver Copilot innovations through continuous fine-tuning. The latest code completions model was trained on over 400 thousand real-world samples from public repositories and further tuned via reinforcement learning using hand-crafted, synthetic training data. Alongside this new model, the team introduced several client-side and UX changes, achieving an improvement of over 30% in retained code for completions and a 35% improvement in speed. These enhancements allow GitHub Copilot to anticipate developer needs and act as a proactive coding partner.

Key implications for the future of AI: Fine-tuning, feedback loops, and speed matter

The experiences of Dragon Copilot and GitHub Copilot underscore a fundamental shift in how differentiated AI products will be built and scaled moving forward. A few key implications emerge:

- Fine-tuning is not optional; it is strategically important: Fine-tuning is no longer niche, but a core capability that unlocks significant performance improvements. Across our products, fine-tuning has led to dramatic gains in accuracy and feature quality. As open-source models democratize access to foundational capabilities, the ability to fine-tune for specific use cases will increasingly define product excellence.

- Feedback loops can create continuous improvement: As foundational models become increasingly commoditized, the long-term defensibility of AI products will not come from the model alone, but from how effectively those models learn from usage. The signals loop, powered by real-world user interactions and fine-tuning, enables teams to deliver high-performing experiences that continuously improve over time.

- Companies must evolve to support iteration at scale, and speed will be key: Building a system that supports frequent model updates requires adjusting data pipelines, fine-tuning, evaluation loops, and team workflows. Companies’ engineering and product orgs must align around fast iteration and fine-tuning, telemetry analysis, synthetic data generation, and automated evaluation frameworks to keep up with user needs and model capabilities. Organizations that evolve their systems and tools to rapidly incorporate signals, from telemetry to human feedback, will be best positioned to lead. Azure AI Foundry provides the essential components needed to facilitate this continuous model and product improvement.

- Agents require intentional design and continuous adaptation: Building agents goes beyond model selection. It demands thoughtful orchestration of memory, reasoning, and feedback mechanisms. Signals loops enable agents to evolve from reactive assistants into proactive co-workers that learn from interactions and improve over time. Azure AI Foundry provides the infrastructure to support this evolution, helping teams design agents that act, adapt dynamically, and deliver sustained value.

While in the early days of AI fine-tuning was not economical and required lots of time and effort, the rise of open-source frontier models and methods like LoRA and distillation have made tuning more cost-effective, and tools have become easier to use. As a result, fine-tuning is more accessible to more organizations than ever before. While out-of-the-box models have a role to play for horizontal workloads like knowledge search or customer service, organizations are increasingly experimenting with fine-tuning for industry and domain-specific scenarios, adding their domain-specific data to their products and models.
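To give a sense of why a method like LoRA lowers the cost of tuning, the short Python sketch below compares trainable parameter counts for a single weight matrix. LoRA freezes the original `d_out × d_in` matrix and trains two low-rank factors of shapes `d_out × r` and `r × d_in`. The layer size and rank used here are illustrative assumptions, not figures from any model mentioned in this article.

```python
def full_params(d_in, d_out):
    """Trainable parameters when the whole weight matrix is tuned."""
    return d_in * d_out


def lora_params(d_in, d_out, rank):
    """Trainable parameters for the two LoRA factors of one matrix."""
    return rank * (d_in + d_out)


# Illustrative values only: a 4096 x 4096 projection with rank 8.
d_in, d_out, rank = 4096, 4096, 8
full = full_params(d_in, d_out)        # 16,777,216
lora = lora_params(d_in, d_out, rank)  # 65,536
ratio = lora / full                    # 1/256 of the full matrix
```

For this one matrix, the adapter trains 1/256 of the parameters that full fine-tuning would update. The overall saving for a whole model depends on which layers receive adapters and on the chosen rank.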

The signals loop ‘future proofs’ AI investments by enabling models to continuously improve over time as usage data is fed back into the fine-tuned model, preventing stagnated performance.

Build adaptive AI experiences with Azure AI Foundry

To simplify the implementation of fine-tuning feedback loops, Azure AI Foundry offers industry-leading fine-tuning capabilities through a unified platform that streamlines the entire AI lifecycle, from model selection to deployment, while embedding enterprise-grade compliance and governance. This empowers teams to build, adapt, and scale AI solutions with confidence and control.

Here are four key reasons why fine-tuning on Azure AI Foundry stands out:

- Model choice: Access a broad portfolio of open and proprietary models from leading providers, with the flexibility to choose between serverless or managed compute options.

- Reliability: Rely on 99.9% availability for Azure OpenAI models and benefit from latency guarantees with provisioned throughput units (PTUs).

- Unified platform: Leverage an end-to-end environment that brings together models, training, evaluation, deployment, and performance metrics, all in one place.

- Scalability: Start small with a cost-effective Developer Tier for experimentation and seamlessly scale to production workloads using PTUs.

Join us in building the future of AI, where copilots become co-workers, and workflows become self-improving engines of productivity.

Learn more

- Register for Ignite’s AI fine-tuning in Azure AI Foundry to make your agents unstoppable.

- Download the white paper: Learn how to unlock business value with fine-tuning.

- Explore fine-tuning with Azure AI Foundry documentation.

