AI is no longer a layer in the stack; it’s becoming the stack. This new era calls for tools that are open, adaptable, and ready to run wherever your ideas live: from cloud to edge, from first experiment to scaled deployment. At Microsoft, we’re building a full-stack AI app and agent factory that empowers every developer not just to use AI, but to create with it.

That’s the vision behind our AI platform spanning cloud to edge. Azure AI Foundry provides a unified platform for building, fine-tuning, and deploying intelligent agents with confidence, while Foundry Local brings open-source models to the edge, enabling flexible, on-device inferencing across billions of devices. Windows AI Foundry builds on this foundation, integrating Foundry Local into Windows 11 to support a secure, low-latency local AI development lifecycle deeply aligned with the Windows platform.

With the launch of OpenAI’s gpt‑oss models, its first open-weight release since GPT‑2, we’re giving developers and enterprises unprecedented ability to run, adapt, and deploy OpenAI models entirely on their own terms.

For the first time, you can run OpenAI models like gpt‑oss‑120b on a single enterprise GPU, or run gpt‑oss‑20b locally. Notably, these aren’t stripped-down replicas: they’re fast, capable, and designed with real-world deployment in mind, whether that means reasoning at scale in the cloud or agentic tasks at the edge.

And because they’re open-weight, these models are also easy to fine-tune, distill, and optimize. Whether you’re adapting for a domain-specific copilot, compressing for offline inference, or prototyping locally before scaling in production, Azure AI Foundry and Foundry Local give you the tooling to do it all securely, efficiently, and without compromise.

Open models, real momentum

Open models have moved from the margins to the mainstream. Today, they’re powering everything from autonomous agents to domain-specific copilots, and redefining how AI gets built and deployed. And with Azure AI Foundry, we’re giving you the infrastructure to move with that momentum:

- With open weights, teams can fine-tune using parameter-efficient methods (LoRA, QLoRA, PEFT), splice in proprietary data, and ship new checkpoints in hours, not weeks.

- You can distill or quantize models, trim context length, or apply structured sparsity to hit strict memory envelopes for edge GPUs and even high-end laptops.

- Full weight access also means you can inspect attention patterns for security audits, inject domain adapters, retrain specific layers, or export to ONNX/Triton for containerized inference on Azure Kubernetes Service (AKS) or Foundry Local.
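To make the parameter-efficiency point in the first bullet concrete, here is a minimal arithmetic sketch of LoRA. It assumes a single weight matrix of shape d_out x d_in adapted with two low-rank factors of rank r. The 4096 dimensions and rank 8 are illustrative values chosen for this example, not gpt‑oss specifics.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Parameters updated when fine-tuning the whole weight matrix W (d_out x d_in)."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters trained by LoRA: B (d_out x rank) plus A (rank x d_in)."""
    return rank * (d_out + d_in)


# Illustrative sizes only (assumption): one 4096 x 4096 projection with a rank-8 adapter.
d_out, d_in, rank = 4096, 4096, 8
full = full_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
print(f"full fine-tune: {full:,} params")
print(f"LoRA rank {rank}: {lora:,} params ({lora / full:.2%} of full)")
```

Under these assumed sizes the adapter trains well under 1% of the parameters in that matrix, which is the reason adapter checkpoints are small and quick to produce.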

In short, open models aren’t just feature-parity replacements; they’re programmable substrates. And Azure AI Foundry provides training pipelines, weight management, and a low-latency serving backplane so you can exploit every one of those levers and push the envelope of AI customization.

Meet gpt‑oss: Two models, infinite possibilities

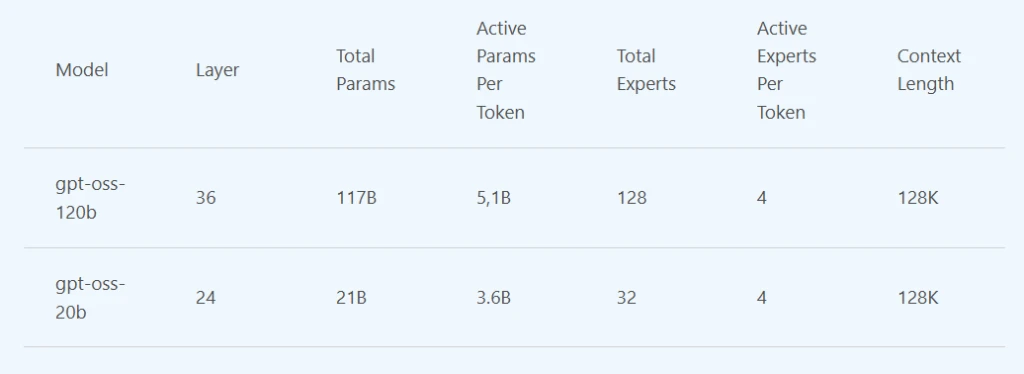

Today, gpt‑oss-120b and gpt‑oss-20b are available on Azure AI Foundry. gpt‑oss-20b is also available on Windows AI Foundry and will be coming soon to macOS via Foundry Local. Whether you’re optimizing for sovereignty, performance, or portability, these models unlock a new level of control.

- gpt‑oss-120b is a reasoning powerhouse. With 120 billion parameters and architectural sparsity, it delivers o4-mini level performance at a fraction of the size, excelling at complex tasks like math, code, and domain-specific Q&A, yet it’s efficient enough to run on a single datacenter-class GPU. Ideal for secure, high-performance deployments where latency or cost matter.

- gpt‑oss-20b is tool-savvy and lightweight. Optimized for agentic tasks like code execution and tool use, it runs efficiently on a range of Windows hardware, including discrete GPUs with 16GB+ of VRAM, with support for more devices coming soon. It’s perfect for building autonomous assistants or embedding AI into real-world workflows, even in bandwidth-constrained environments.
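The hardware guidance above can be sanity-checked with a rough, weights-only memory estimate. The sketch below takes nominal parameter counts from the model names and a few candidate bit widths, all of which are assumptions for illustration. It ignores the KV cache, activations, and runtime overhead, and it does not claim to reflect the format the models actually ship in.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Nominal parameter counts read off the model names (assumption: real counts differ slightly).
MODELS = {"gpt-oss-120b": 120e9, "gpt-oss-20b": 20e9}

for name, params in MODELS.items():
    for bits in (16, 8, 4):
        gb = weight_memory_gb(params, bits)
        print(f"{name} at {bits}-bit: ~{gb:.0f} GB of weights")
```

Under these assumptions, 4-bit weights for the 20B model come to roughly 10 GB, which leaves headroom on a 16 GB GPU for everything the estimate leaves out.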

Both models will soon be API-compatible with the now ubiquitous Responses API. That means you can swap them into existing apps with minimal changes and maximum flexibility.
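As a sketch of what "minimal changes" could look like, the snippet below builds (without sending) a request in the Responses API shape, where the body carries a `model` and an `input` field. The base URL, API key, bearer-token header, and the use of the model names as deployment identifiers are all placeholders assumed for illustration. Check your own deployment for the actual endpoint and authentication scheme.

```python
import json
import urllib.request

# Placeholder endpoint and key (assumptions): substitute your own deployment's values.
BASE_URL = "https://example.invalid/v1"
API_KEY = "YOUR_API_KEY"


def build_responses_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a POST request in the Responses API shape."""
    payload = {"model": model, "input": prompt}
    return urllib.request.Request(
        url=f"{BASE_URL}/responses",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_KEY}",  # auth scheme is an assumption
        },
        method="POST",
    )


# Swapping models changes only the model string; the rest of the call is untouched.
for model_name in ("gpt-oss-120b", "gpt-oss-20b"):
    req = build_responses_request(model_name, "Summarize this incident report.")
    print(req.get_method(), req.full_url, json.loads(req.data)["model"])
```

Keeping the model identifier as the only variable is what makes it cheap to trial one model against another behind the same application code.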

Bringing gpt‑oss to Cloud and Edge

Azure AI Foundry is more than a model catalog; it’s a platform for AI builders. With more than 11,000 models and growing, it gives developers a unified space to evaluate, fine-tune, and productionize models with enterprise-grade reliability and security.

Today, with gpt‑oss in the catalog, you can:

- Spin up inference endpoints using gpt‑oss in the cloud with just a few CLI commands.

- Fine-tune and distill the models using your own data and deploy with confidence.

- Mix open and proprietary models to match task-specific needs.

For organizations developing scenarios only possible on client devices, Foundry Local brings prominent open-source models to Windows AI Foundry, pre-optimized for inference on your own hardware, supporting CPUs, GPUs, and NPUs, through a simple CLI, API, and SDK.

Whether you’re working in an offline setting, building in a secure network, or running at the edge, Foundry Local and Windows AI Foundry let you go fully cloud-optional. With the capability to deploy gpt‑oss-20b on modern high-performance Windows PCs, your data stays where you want it, and the power of frontier-class models comes to you.

This is hybrid AI in action: the ability to mix and match models, optimize performance and cost, and meet your data where it lives.
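One way to picture the mix-and-match idea is a small routing policy that decides, per task, whether to use a local or a cloud deployment. The sketch below is purely hypothetical: the `Task` fields, the `choose_target` function, and the `local:` and `cloud:` labels are invented for illustration and are not part of any Foundry API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Hypothetical description of a unit of work to be routed."""
    name: str
    data_must_stay_on_device: bool = False
    offline: bool = False
    needs_heavy_reasoning: bool = False


def choose_target(task: Task) -> str:
    """Illustrative policy: locality constraints win first, then capability, then cost."""
    if task.data_must_stay_on_device or task.offline:
        return "local:gpt-oss-20b"
    if task.needs_heavy_reasoning:
        return "cloud:gpt-oss-120b"
    return "cloud:gpt-oss-20b"


tasks = [
    Task("summarize patient notes", data_must_stay_on_device=True),
    Task("multi-step math proof", needs_heavy_reasoning=True),
    Task("classify support ticket"),
]
for t in tasks:
    print(f"{t.name} -> {choose_target(t)}")
```

Checking data locality before capability means a sensitive task never leaves the device, even when a larger cloud model would otherwise be preferred.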

Empowering builders and decision makers

The availability of gpt‑oss on Azure and Windows unlocks powerful new possibilities for both builders and business leaders.

For developers, open weights mean full transparency. Inspect the model, customize, fine-tune, and deploy on your own terms. With gpt‑oss, you can build with confidence, knowing exactly how your model works and how to improve it for your use case.

For decision makers, it’s about control and flexibility. With gpt‑oss, you get competitive performance with no black boxes, fewer trade-offs, and more options across deployment, compliance, and cost.

A vision for the future: Open and responsible AI, together

The release of gpt‑oss and its integration into Azure and Windows is part of a bigger story. We envision a future where AI is ubiquitous, and we are committed to being an open platform to bring these innovative technologies to our customers, across all our data centers and devices.

By offering gpt‑oss through a variety of entry points, we’re doubling down on our commitment to democratize AI. We recognize that our customers will benefit from a diverse portfolio of models, proprietary and open, and we’re here to support whichever path unlocks value for you. Whether you are working with open-source models or proprietary ones, Foundry’s built-in safety and security tools ensure consistent governance, compliance, and trust, so customers can innovate confidently across all model types.

Finally, our support of gpt‑oss is just the latest in our commitment to open tools and standards. In June we announced that the GitHub Copilot Chat extension is now open source on GitHub under the MIT license, the first step to make VS Code an open source AI editor. We seek to accelerate innovation with the open-source community and drive greater value to our market-leading developer tools. This is what it looks like when research, product, and platform come together. The very breakthroughs we’ve enabled with our cloud at OpenAI are now open tools that anyone can build on, and Azure is the bridge that brings them to life.

Next steps and resources for navigating gpt‑oss

- Deploy gpt‑oss in the cloud today with a few CLI commands using Azure AI Foundry. Browse the Azure AI Model Catalog to spin up an endpoint.

- Deploy gpt‑oss-20b on your Windows device today (and soon on macOS) via Foundry Local. Follow the QuickStart guide to learn more.

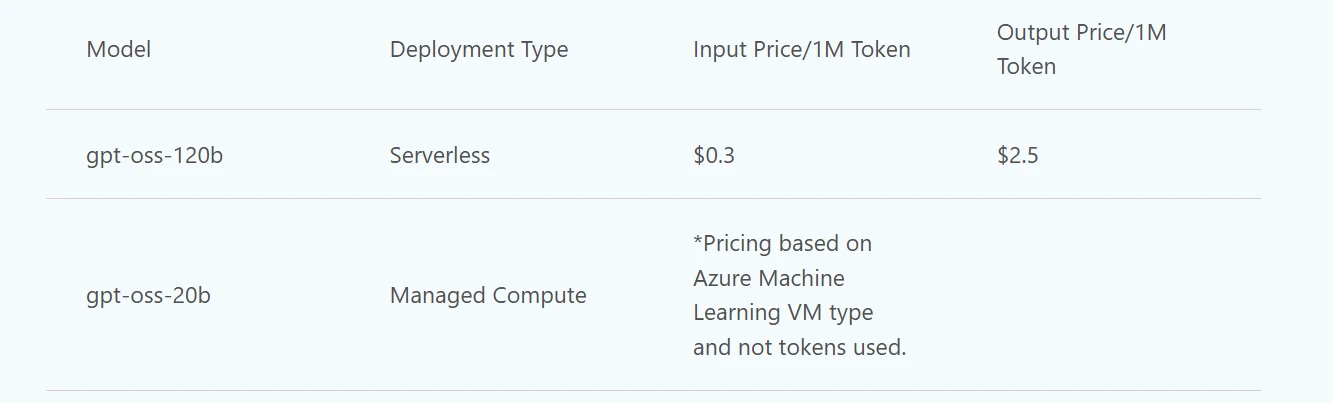

- Pricing¹ for these models is as follows:

*See the Managed Compute pricing page.

¹Pricing is accurate as of August 2025.
