Microsoft continues to add to the conversation by unveiling its newest models, Phi-4-reasoning, Phi-4-reasoning-plus, and Phi-4-mini-reasoning.

A new era of AI

One year ago, Microsoft introduced small language models (SLMs) to customers with the release of Phi-3 on Azure AI Foundry, leveraging research on SLMs to expand the range of efficient AI models and tools available to customers.

Today, we are excited to introduce Phi-4-reasoning, Phi-4-reasoning-plus, and Phi-4-mini-reasoning, marking a new era for small language models and once again redefining what is possible with small and efficient AI.

Reasoning models, the next step forward

Reasoning models are trained to leverage inference-time scaling to perform complex tasks that demand multi-step decomposition and internal reflection. They excel in mathematical reasoning and are emerging as the backbone of agentic applications with complex, multi-faceted tasks. Such capabilities are typically found only in large frontier models. Phi-reasoning models introduce a new category of small language models. Using distillation, reinforcement learning, and high-quality data, these models balance size and performance. They are small enough for low-latency environments yet maintain strong reasoning capabilities that rival much bigger models. This blend allows even resource-limited devices to perform complex reasoning tasks efficiently.

Phi-4-reasoning and Phi-4-reasoning-plus

Phi-4-reasoning is a 14-billion-parameter open-weight reasoning model that rivals much larger models on complex reasoning tasks. Trained via supervised fine-tuning of Phi-4 on carefully curated reasoning demonstrations from OpenAI o3-mini, Phi-4-reasoning generates detailed reasoning chains that effectively leverage additional inference-time compute. The model demonstrates that meticulous data curation and high-quality synthetic datasets allow smaller models to compete with larger counterparts.
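In general terms, supervised fine-tuning on teacher demonstrations means minimizing next-token cross-entropy over the demonstration's response tokens while masking out the prompt tokens. The sketch below illustrates that general technique in plain Python. It is not Microsoft's training code: the `masked_sft_loss` helper, the toy 3-token vocabulary, and the probabilities are hypothetical, and a real trainer would obtain the distributions from a softmax over model logits.

```python
import math
from typing import Sequence


def masked_sft_loss(
    token_probs: Sequence[Sequence[float]],
    targets: Sequence[int],
    loss_mask: Sequence[int],
) -> float:
    """Mean negative log-likelihood over positions where loss_mask == 1.

    token_probs[t] is the model's predicted distribution at position t,
    targets[t] is the reference (teacher) token id at position t, and
    loss_mask[t] is 1 for response tokens and 0 for prompt tokens.
    """
    total, count = 0.0, 0
    for probs, target, keep in zip(token_probs, targets, loss_mask):
        if keep:
            total += -math.log(probs[target])
            count += 1
    if count == 0:
        raise ValueError("loss_mask selects no tokens")
    return total / count


# Hypothetical toy example: 4 positions over a 3-token vocabulary.
probs = [
    [0.7, 0.2, 0.1],    # prompt position (masked out)
    [0.1, 0.8, 0.1],    # prompt position (masked out)
    [0.25, 0.25, 0.5],  # response position
    [0.5, 0.25, 0.25],  # response position
]
targets = [0, 1, 2, 0]
mask = [0, 0, 1, 1]
loss = masked_sft_loss(probs, targets, mask)
print(round(loss, 4))
```

Only the two response positions contribute, each assigning probability 0.5 to the teacher's token, so the loss equals ln 2.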

Phi-4-reasoning-plus builds on Phi-4-reasoning and is further trained with reinforcement learning to use more inference-time compute, generating 1.5x more tokens than Phi-4-reasoning to deliver higher accuracy.

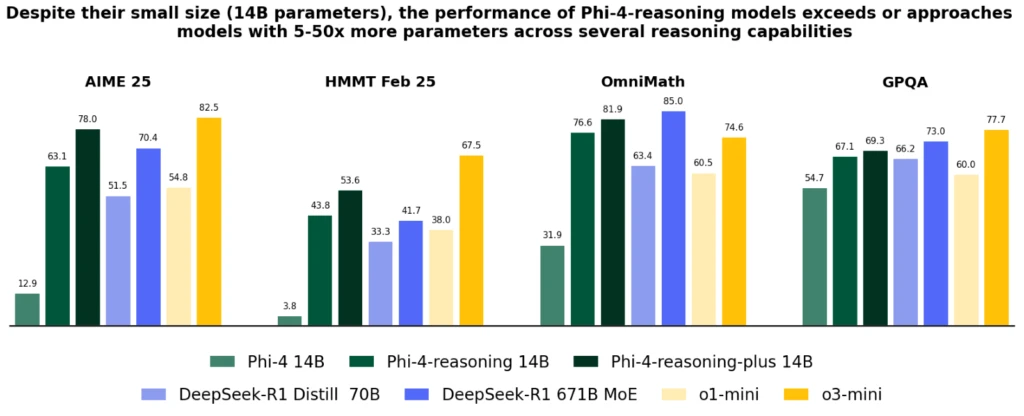

Despite their significantly smaller size, both models outperform OpenAI o1-mini and DeepSeek-R1-Distill-Llama-70B on most benchmarks, including mathematical reasoning and Ph.D.-level science questions. They also outperform the full DeepSeek-R1 model (671 billion parameters) on the AIME 2025 test, the 2025 qualifier for the USA Math Olympiad. Both models are available on Azure AI Foundry and HuggingFace.

Figure 1. Phi-4-reasoning performance across representative reasoning benchmarks spanning mathematical and scientific reasoning. We illustrate the performance gains from reasoning-focused post-training of Phi-4 via Phi-4-reasoning (SFT) and Phi-4-reasoning-plus (SFT+RL), alongside a representative set of baselines from two model families: open-weight models from DeepSeek, including DeepSeek-R1 (671B Mixture-of-Experts) and its distilled dense variant DeepSeek-R1-Distill-Llama-70B, and OpenAI's proprietary frontier models o1-mini and o3-mini. Phi-4-reasoning and Phi-4-reasoning-plus consistently outperform the base model Phi-4 by significant margins, exceed DeepSeek-R1-Distill-Llama-70B (5x larger), and demonstrate competitive performance against significantly larger models such as DeepSeek-R1.

Figure 2. Accuracy of models across general-purpose benchmarks for: long input context QA (FlenQA), instruction following (IFEval), coding (HumanEvalPlus), knowledge and language understanding (MMLUPro), safety detection (ToxiGen), and other general skills (ArenaHard and PhiBench). Phi-4-reasoning models deliver a major improvement over Phi-4, surpass larger models like DeepSeek-R1-Distill-70B, and approach DeepSeek-R1 across various reasoning and general capabilities, including math, coding, algorithmic problem solving, and planning. The technical report provides extensive quantitative evidence of these improvements across diverse reasoning tasks.
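The size comparisons in this section reduce to simple arithmetic on parameter counts. The sketch below uses only the counts stated in this post. One caveat: the 671B figure for DeepSeek-R1 counts all Mixture-of-Experts parameters, and only a subset is active for any given token, so the ratio describes total model size rather than per-token compute.

```python
# Parameter counts in billions, as stated in this post.
PARAMS_B = {
    "Phi-4-reasoning": 14,
    "DeepSeek-R1-Distill-Llama-70B": 70,
    "DeepSeek-R1 (671B MoE, total)": 671,
}


def size_ratio(larger: str, smaller: str) -> float:
    """How many times more (total) parameters `larger` has than `smaller`."""
    return PARAMS_B[larger] / PARAMS_B[smaller]


for name in ("DeepSeek-R1-Distill-Llama-70B", "DeepSeek-R1 (671B MoE, total)"):
    print(f"{name}: {size_ratio(name, 'Phi-4-reasoning'):.1f}x the size of Phi-4-reasoning")
```

This prints 5.0x for the distilled 70B model, matching the "5x larger" in Figure 1, and about 47.9x for DeepSeek-R1.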

Phi-4-mini-reasoning

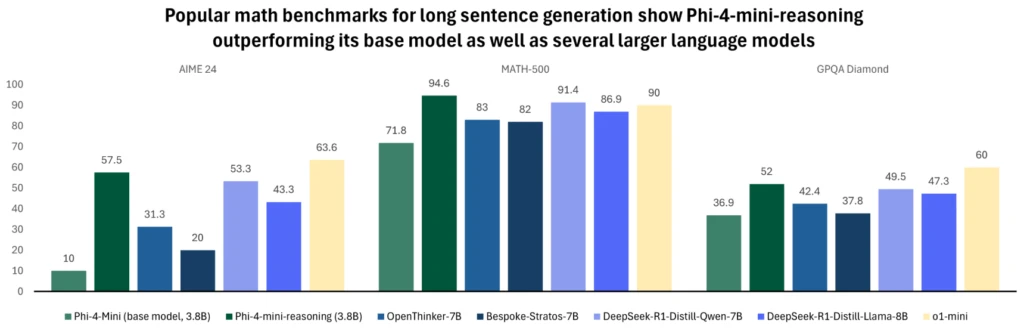

Phi-4-mini-reasoning is designed to meet the demand for a compact reasoning model. This transformer-based language model is optimized for mathematical reasoning, providing high-quality, step-by-step problem solving in environments with constrained compute or latency. Fine-tuned with synthetic data generated by the DeepSeek-R1 model, Phi-4-mini-reasoning balances efficiency with advanced reasoning ability. It is ideal for educational applications, embedded tutoring, and lightweight deployment on edge or mobile systems, and is trained on over 1 million diverse math problems spanning multiple levels of difficulty, from middle school to Ph.D. level. Try out the model on Azure AI Foundry or HuggingFace today.

Figure 3. The graph compares the performance of various models on popular math benchmarks for long sentence generation. Phi-4-mini-reasoning outperforms its base model on long sentence generation across each evaluation, as well as larger models like OpenThinker-7B, Llama-3.2-3B-instruct, DeepSeek-R1-Distill-Qwen-7B, DeepSeek-R1-Distill-Llama-8B, and Bespoke-Stratos-7B. Phi-4-mini-reasoning is comparable to OpenAI o1-mini across math benchmarks, surpassing that model's performance on the Math-500 and GPQA Diamond evaluations. As seen above, Phi-4-mini-reasoning, with 3.8B parameters, outperforms models over twice its size. For more information about the model, read the technical report, which provides additional quantitative insights.

Over the past year, Phi's evolution has continually pushed the envelope of quality versus size, expanding the family with new features to address diverse needs. Across the range of Windows 11 devices, these models are available to run locally on CPUs and GPUs.

As Windows works toward creating a new kind of PC, Phi models have become an integral part of Copilot+ PCs through the NPU-optimized Phi Silica variant. This highly efficient, OS-managed version of Phi is designed to be preloaded in memory, with blazing-fast time-to-first-token responses and power-efficient token throughput, so it can be invoked concurrently with other applications running on your PC.
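Time to first token (TTFT) and token throughput are the two latency figures mentioned above. The sketch below shows one common way to measure them from any token stream. The `measure_stream` and `scripted_clock` helpers are hypothetical and are not part of any Phi Silica or Windows API; the scripted clock simply makes the demo deterministic, and in practice you would pass a real streaming generator and keep the default `time.perf_counter`.

```python
import time
from typing import Callable, Iterable, Iterator, Tuple


def measure_stream(
    tokens: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> Tuple[float, float, int]:
    """Return (time_to_first_token_s, decode_tokens_per_s, token_count).

    TTFT covers everything before the first token arrives (including prompt
    processing). Throughput is measured from the first token to the last,
    so it reflects steady-state decoding only.
    """
    start = clock()
    first = last = None
    count = 0
    for _ in tokens:  # each iteration waits for the next streamed token
        now = clock()
        if first is None:
            first = now
        last = now
        count += 1
    if first is None:
        raise ValueError("stream produced no tokens")
    ttft = first - start
    decode_time = last - first
    # With a single token (or zero elapsed time) there is no decode rate to report.
    tps = (count - 1) / decode_time if count > 1 and decode_time > 0 else float("nan")
    return ttft, tps, count


def scripted_clock(times: Iterable[float]) -> Callable[[], float]:
    """Hypothetical deterministic clock for demos: returns the given times in order."""
    it: Iterator[float] = iter(times)
    return lambda: next(it)


# Simulated stream: first token after 0.25 s, then one token every 0.10 s.
ttft, tps, n = measure_stream(
    ["The", " answer", " is", " 42"],
    clock=scripted_clock([0.0, 0.25, 0.35, 0.45, 0.55]),
)
print(f"TTFT={ttft:.2f}s, throughput={tps:.1f} tok/s over {n} tokens")
```

Separating the two numbers matters because they are bound by different costs: TTFT is dominated by processing the prompt, while throughput reflects the per-token decoding cost.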

Phi Silica is used in core experiences like Click to Do, providing useful text intelligence tools for any content on your screen, and is available as developer APIs that can be readily integrated into applications. It is already used in several productivity applications, such as Outlook, where it powers Copilot summary features offline. These small but mighty models have already been optimized and integrated for use across applications throughout our PC ecosystem. The Phi-4-reasoning and Phi-4-mini-reasoning models leverage the low-bit optimizations for Phi Silica and will soon be available to run on Copilot+ PC NPUs.
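A rough estimate shows why low-bit weights matter on edge devices: weight storage scales linearly with bits per weight. The sketch below is a weights-only estimate that ignores the KV cache, activations, and per-group quantization scales. The 16-, 8-, and 4-bit widths are illustrative assumptions, not the actual Phi Silica quantization format.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes), weights only.

    params_billion * 1e9 weights * bits / 8 bytes, divided by 1e9 bytes per GB,
    simplifies to params_billion * bits / 8.
    """
    return params_billion * bits_per_weight / 8


# Parameter counts as stated in this post; bit widths are illustrative.
for name, params in (("Phi-4-mini-reasoning", 3.8), ("Phi-4-reasoning", 14.0)):
    for bits in (16, 8, 4):
        print(f"{name} @ {bits}-bit: ~{weight_memory_gb(params, bits):.1f} GB")
```

For example, moving Phi-4-mini-reasoning from 16-bit to 4-bit weights cuts the estimate from about 7.6 GB to about 1.9 GB.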

Safety and Microsoft's approach to responsible AI

At Microsoft, responsible AI is a fundamental principle guiding the development and deployment of AI systems, including our Phi models. Phi models are developed in accordance with Microsoft AI principles: accountability, transparency, fairness, reliability and safety, privacy and security, and inclusiveness.

The Phi family of models has adopted a robust safety post-training approach, leveraging a combination of Supervised Fine-Tuning (SFT), Direct Preference Optimization (DPO), and Reinforcement Learning from Human Feedback (RLHF) techniques. These methods use various datasets, including publicly available datasets focused on helpfulness and harmlessness, as well as various safety-related questions and answers. While the Phi family of models is designed to perform a wide range of tasks effectively, it is important to acknowledge that all AI models may exhibit limitations. To better understand these limitations and the measures in place to address them, please refer to the model cards below, which provide detailed information on responsible AI practices and guidelines.
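Of the techniques listed above, DPO has a compact closed-form objective: it pushes the trainable policy to raise the likelihood of a preferred ("chosen") response relative to a rejected one, measured against a frozen reference model. The sketch below computes the standard per-example DPO loss. It illustrates the general technique only and does not describe Microsoft's safety post-training pipeline; the β value of 0.1 and the log-probabilities in the usage lines are illustrative.

```python
import math


def dpo_loss(
    policy_chosen_logp: float,
    policy_rejected_logp: float,
    ref_chosen_logp: float,
    ref_rejected_logp: float,
    beta: float = 0.1,
) -> float:
    """Per-example DPO loss: -log(sigmoid(beta * margin)).

    Each argument is the summed log-probability of a full response under
    the trainable policy or the frozen reference model.
    """
    margin = (policy_chosen_logp - ref_chosen_logp) - (
        policy_rejected_logp - ref_rejected_logp
    )
    z = beta * margin
    # -log(sigmoid(z)) == log(1 + exp(-z)). The two branches are algebraically
    # equal; choosing by sign keeps exp() from overflowing for large |z|.
    if z >= 0:
        return math.log1p(math.exp(-z))
    return -z + math.log1p(math.exp(z))


# Policy and reference agree exactly: margin is 0, so the loss is log(2).
print(dpo_loss(-5.0, -5.0, -5.0, -5.0))
# Policy has raised the chosen response's likelihood relative to the rejected one.
print(dpo_loss(-10.0, -15.0, -12.0, -13.0))
```

The loss falls below log 2 once the policy favors the chosen response more strongly than the reference does, and rises above it when the preference is reversed.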

Learn more here:

- Try out the new models on Azure AI Foundry.

- Read the Phi Cookbook.

- Read about Phi reasoning models on edge devices.

- Learn more about Phi-4-mini-reasoning.

- Learn more about Phi-4-reasoning.

- Learn more about Phi-4-reasoning-plus.

- Read more about Phi reasoning on the Educators Developer blog.

11 months ago