This blog breaks down the available pricing and deployment options, and the tools that support scalable, cost-conscious AI deployments.

When you’re building with AI, every decision counts, especially when it comes to cost. Whether you’re just getting started or scaling enterprise-grade applications, the last thing you want is unpredictable pricing or rigid infrastructure slowing you down. Azure OpenAI is designed with that in mind: flexible enough for early experiments, powerful enough for global deployments, and priced to match how you actually use it.

From startups to the Fortune 500, more than 60,000 customers are choosing Azure AI Foundry, not just for access to foundational and reasoning models, but because it meets them where they are, with deployment options and pricing models that align to real business needs. This is about more than just AI. It’s about making innovation sustainable, scalable, and accessible.

Flexible pricing models that match your needs

Azure OpenAI supports three distinct pricing models designed to meet different workload profiles and business requirements:

- Standard: For bursty or variable workloads where you want to pay only for what you use.

- Provisioned: For high-throughput, performance-sensitive applications that require consistent throughput.

- Batch: For large-scale jobs that can be processed asynchronously at a discounted rate.

Each approach is designed to scale with you, whether you’re validating a use case or deploying across business units.

Standard

The Standard deployment model is ideal for teams that want flexibility. You’re charged per API call based on tokens consumed, which helps optimize budgets during periods of lower usage.

Best for: Development, prototyping, or production workloads with variable demand.

You can choose between:

- Global deployments: To ensure optimal latency across geographies.

- OpenAI Data Zones: For more flexibility and control over data privacy and residency.

With all deployment selections, data is stored at rest within the Azure region you chose for your resource.
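As a rough illustration of token-based billing, the sketch below estimates a monthly charge from token counts. The split into separate input and output rates, the `TokenPrice` type, and every price shown are assumptions made for the example, not published Azure OpenAI rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """Hypothetical per-million-token prices in USD (not real Azure rates)."""
    input_per_million: float
    output_per_million: float


def estimate_cost(input_tokens: int, output_tokens: int, price: TokenPrice) -> float:
    """Return the estimated charge for a workload under per-token billing."""
    return (
        input_tokens / 1_000_000 * price.input_per_million
        + output_tokens / 1_000_000 * price.output_per_million
    )


# Placeholder rates chosen only to make the arithmetic easy to follow.
EXAMPLE_PRICE = TokenPrice(input_per_million=2.0, output_per_million=8.0)

quiet_month = estimate_cost(5_000_000, 1_000_000, EXAMPLE_PRICE)
busy_month = estimate_cost(50_000_000, 10_000_000, EXAMPLE_PRICE)
print(f"quiet month: ${quiet_month:.2f}, busy month: ${busy_month:.2f}")
```

Because the charge tracks usage directly, a month with a tenth of the traffic costs a tenth as much, which is the property that makes this model a fit for variable demand.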

Batch

The Batch model is designed for high-efficiency, large-scale inference. Jobs are submitted and processed asynchronously, with a 24-hour target turnaround, at up to 50% less than Global Standard pricing. Batch also includes large-scale workload support, so you can submit massive bulk queries with minimal friction and reduce overall processing time.

Best for: Large-volume tasks with flexible latency needs.

Typical use cases include:

- Large-scale data processing and content generation.

- Data transformation pipelines.

- Model evaluation across extensive datasets.
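To make the asynchronous workflow more concrete, here is a minimal sketch that prepares a batch input file as JSON Lines, one request per line. The field names (`custom_id`, `method`, `url`, `body`) reflect the request shape as commonly documented for the Batch API, but treat them, the `/chat/completions` path, and the deployment name `my-batch-deployment` as assumptions to verify against the current documentation. Uploading the file and creating the job are not shown.

```python
import json


def build_batch_lines(documents: list[str], deployment: str) -> list[str]:
    """Turn a list of documents into JSON Lines batch requests."""
    lines = []
    for index, text in enumerate(documents):
        request = {
            # custom_id lets you match each result back to its input later.
            "custom_id": f"doc-{index}",
            "method": "POST",
            "url": "/chat/completions",  # assumed path; check the docs
            "body": {
                "model": deployment,  # hypothetical batch deployment name
                "messages": [
                    {"role": "system", "content": "Summarize the document in one sentence."},
                    {"role": "user", "content": text},
                ],
            },
        }
        lines.append(json.dumps(request))
    return lines


lines = build_batch_lines(
    ["First report text.", "Second report text."],
    deployment="my-batch-deployment",
)
print("\n".join(lines))
```

Since results arrive later and not necessarily in submission order, giving every request a stable identifier is the main design point of the sketch.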

Customer in action: Ontada

Ontada, a McKesson company, used the Batch API to transform over 150 million oncology documents into structured insights. Applying LLMs across 39 cancer types, they unlocked 70% of previously inaccessible data and cut document processing time by 75%. Learn more in the Ontada case study.

Provisioned

The Provisioned model provides dedicated throughput via Provisioned Throughput Units (PTUs). This enables stable latency and high throughput, ideal for production use cases requiring real-time performance or processing at scale. Commitments can be hourly, monthly, or yearly, with corresponding discounts.

Best for: Enterprise workloads with predictable demand and the need for consistent performance.

Common use cases:

- High-volume retrieval and document processing scenarios.

- Call center operations with predictable traffic hours.

- Retail assistant with consistently high throughput.
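One way to reason about when dedicated throughput pays off is a break-even estimate against pay-as-you-go pricing. The sketch below is a simplification under stated assumptions: the PTU hourly price, the blended per-token rate, and the PTU count are all hypothetical, and it ignores the throughput ceiling that a given number of PTUs imposes.

```python
def break_even_tokens_per_hour(
    ptu_count: int,
    hourly_price_per_ptu: float,
    paygo_price_per_million: float,
) -> float:
    """Tokens per hour at which pay-as-you-go spend equals the PTU commitment."""
    hourly_commit = ptu_count * hourly_price_per_ptu
    return hourly_commit / paygo_price_per_million * 1_000_000


# Hypothetical figures: 50 PTUs at $1.00 per PTU-hour versus a blended
# pay-as-you-go rate of $5.00 per million tokens.
threshold = break_even_tokens_per_hour(50, 1.0, 5.0)
print(f"Break-even at roughly {threshold:,.0f} tokens per hour")
```

Sustained traffic above the threshold favors a provisioned commitment, while traffic that stays below it is usually cheaper on Standard.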

Customers in action: Visier and UBS

- Visier built “Vee,” a generative AI assistant that serves up to 150,000 users per hour. By using PTUs, Visier improved response times threefold compared to pay-as-you-go models and reduced compute costs at scale. Read the case study.

- UBS created “UBS Red,” a secure AI platform supporting 30,000 employees across regions. PTUs allowed the bank to deliver reliable performance with region-specific deployments across Switzerland, Hong Kong, and Singapore. Read the case study.

Deployment types for Standard and Provisioned

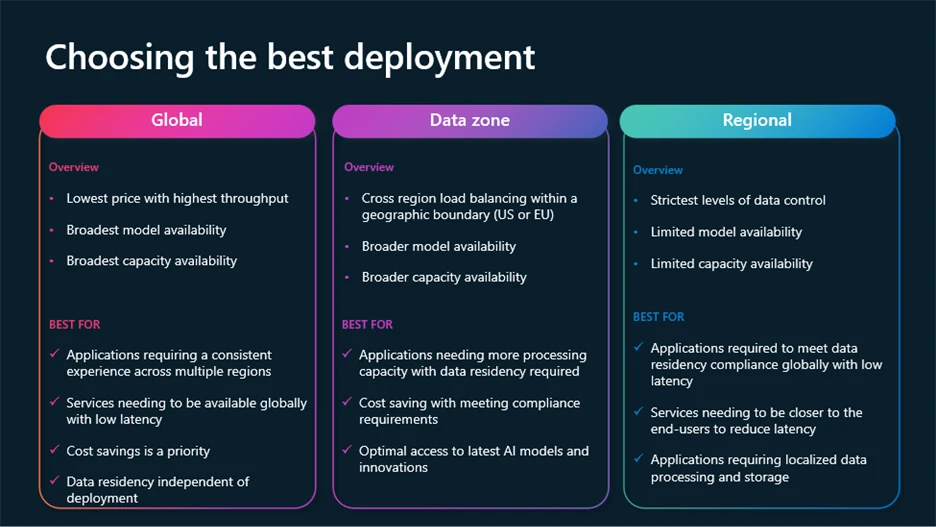

To meet growing requirements for control, compliance, and cost optimization, Azure OpenAI supports multiple deployment types:

- Global: Most cost-effective. Routes requests through the global Azure infrastructure, with data residency at rest.

- Regional: Keeps data processing in a specific Azure region (28 available today), with data residency both at rest and during processing in the selected region.

- Data Zones: Offers a middle ground. Processing remains within geographic zones (E.U. or U.S.) for added compliance without the full regional cost overhead.

Global and Data Zone deployments are available across Standard, Provisioned, and Batch models.
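The trade-off among the three deployment types can be summarized as a small decision helper. This is only an illustrative sketch: the function, its parameter names, and the `DeploymentType` enum are hypothetical, and a real decision would also weigh model availability and price.

```python
from enum import Enum


class DeploymentType(Enum):
    GLOBAL = "Global"
    DATA_ZONE = "Data Zone"
    REGIONAL = "Regional"


def choose_deployment_type(
    processing_must_stay_in_region: bool,
    processing_must_stay_in_zone: bool,
) -> DeploymentType:
    """Pick the least restrictive type that satisfies the processing constraint."""
    if processing_must_stay_in_region:
        # Strictest option: data at rest and processing stay in one region.
        return DeploymentType.REGIONAL
    if processing_must_stay_in_zone:
        # Middle ground: processing stays within the E.U. or U.S. zone.
        return DeploymentType.DATA_ZONE
    # No processing constraint: the most cost-effective option.
    return DeploymentType.GLOBAL


choice = choose_deployment_type(
    processing_must_stay_in_region=False,
    processing_must_stay_in_zone=True,
)
print(choice.value)
```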

Dynamic features help you cut costs while optimizing performance

Several dynamic new features designed to help you get the best results at lower cost are now available.

- Model router for Azure AI Foundry: A deployable AI chat model that automatically selects the best underlying chat model to respond to a given prompt. Suited to diverse use cases, model router delivers high performance while saving on compute costs where possible, all packaged as a single model deployment.

- Batch large-scale workload support: Processes bulk requests at lower cost. Efficiently handle large-scale workloads to reduce processing time, with a 24-hour target turnaround, at up to 50% less cost than Global Standard.

- Provisioned throughput dynamic spillover: Provides seamless overflow for your high-performing applications on provisioned deployments. Manage traffic bursts without service disruption.

- Prompt caching: Built-in optimization for repeatable prompt patterns. It accelerates response times, scales throughput, and helps cut token costs significantly.

- Azure OpenAI monitoring dashboard: Continuously track performance, usage, and reliability across your deployments.

To learn more about these features and how to leverage the latest innovations in Azure AI Foundry models, watch this session from Build 2025 on optimizing Gen AI applications at scale.

Beyond pricing and deployment flexibility, Azure OpenAI integrates with Microsoft Cost Management tools to give teams visibility and control over their AI spend.

Capabilities include:

- Real-time cost analysis.

- Budget creation and alerts.

- Support for multi-cloud environments.

- Cost allocation and chargeback by team, project, or department.

These tools help finance and engineering teams stay aligned, making it easier to understand usage trends, track optimizations, and avoid surprises.

Built-in integration with the Azure ecosystem

Azure OpenAI is part of a larger ecosystem that includes:

- Azure AI Foundry: Everything you need to design, customize, and manage AI applications and agents.

- Azure Machine Learning: For model training, deployment, and MLOps.

- Azure Data Factory: For orchestrating data pipelines.

- Azure AI services: For document processing, search, and more.

This integration simplifies the end-to-end lifecycle of building, customizing, and managing AI solutions. You don’t have to stitch together separate platforms, and that means faster time-to-value and fewer operational headaches.

A trusted foundation for enterprise AI

Microsoft is committed to enabling AI that is secure, private, and safe. That commitment shows up not just in policy, but in product:

- Secure Future Initiative: A comprehensive security-by-design approach.

- Responsible AI principles: Applied across tools, documentation, and deployment workflows.

- Enterprise-grade compliance: Covering data residency, access controls, and auditing.

Get started with Azure AI Foundry

- Build custom generative AI models with Azure OpenAI in Foundry Models.

- Documentation for Deployment types.

- Learn more about Azure OpenAI pricing.

- Design, customize, and manage AI applications with Azure AI Foundry.
