For early-stage open-source projects, the “Getting started” guide is often the first real interaction a developer has with the project. If a command fails, an output doesn’t match, or a step is unclear, most users won’t file a bug report; they will just move on.

Drasi, a CNCF sandbox project that detects changes in your data and triggers immediate reactions, is supported by our small team of four engineers in Microsoft Azure’s Office of the Chief Technology Officer. We have comprehensive tutorials, but we are shipping code faster than we can manually test them.

The team didn’t realize how big this gap was until late 2025, when GitHub updated its Dev Container infrastructure, bumping the minimum Docker version. The update broke the Docker daemon connection, and every single tutorial stopped working. Because we relied on manual testing, we didn’t immediately know the extent of the damage. Any developer trying Drasi during that window would have hit a wall.

This incident forced a realization: with advanced AI coding assistants, documentation testing can be converted into a monitoring problem.

The problem: Why does documentation break?

Documentation usually breaks for two reasons:

1. The curse of knowledge

Experienced developers write documentation with implicit context. When we write “wait for the query to bootstrap,” we know to run `drasi list query` and watch for the `Running` status, or, even better, to run the `drasi wait` command. A new user has no such context. Neither does an AI agent. They read the instructions literally and don’t know what to do. They get stuck on the “how,” while we only document the “what.”
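To make the gap between the “what” and the “how” concrete, here is a minimal sketch of what “wait for the query to bootstrap” actually expands to when someone has to do it by hand: poll a status check until it succeeds or a timeout expires. The helper names `wait_until` and `query_is_running` are hypothetical, and the parser assumes that `drasi list query` prints the query ID and its status as whitespace-separated tokens on one row; verify this against the real output before relying on it.

```python
import time
from typing import Callable


def wait_until(check: Callable[[], bool], timeout_s: float = 120.0,
               interval_s: float = 2.0) -> bool:
    """Poll `check` until it returns True (condition met) or `timeout_s` elapses."""
    deadline = time.monotonic() + timeout_s
    while True:
        if check():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval_s)


def query_is_running(list_output: str, query_id: str) -> bool:
    """HYPOTHETICAL parser for `drasi list query` output.

    ASSUMPTION: one row per query, with the query ID and its status appearing
    as whitespace-separated tokens on the same line.
    """
    for line in list_output.splitlines():
        tokens = line.split()
        if query_id in tokens and "Running" in tokens:
            return True
    return False
```

In practice, `check` would wrap a call to `drasi list query` (for example via `subprocess.run`). Documenting `drasi wait` instead hands the whole loop to the CLI, which is why it is the better instruction.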

2. Silent drift

Documentation doesn’t fail loudly like code does. When you rename a configuration file in your codebase, the build fails immediately. But when your documentation still references the old filename, nothing happens. The drift accumulates silently until a user reports confusion.

This is compounded for tutorials like ours, which spin up sandbox environments with Docker, k3d, and sample databases. When any upstream dependency changes (a deprecated flag, a bumped version, or a new default), our tutorials can break silently.

The solution: Agents as synthetic users

To solve this, we treated tutorial testing as a simulation problem. We built an AI agent that acts as a “synthetic new user.”

This agent has three critical characteristics:

- It is naïve: It has no prior knowledge of Drasi; it knows only what is explicitly written in the tutorial.

- It is literal: It executes every command exactly as written. If a step is missing, it fails.

- It is unforgiving: It verifies every expected output. If the doc says, “You should see ‘Success’”, and the command line interface (CLI) just returns silently, the agent flags it and fails fast.
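These three traits translate into a very small execution loop. The sketch below assumes each tutorial step has already been reduced to a shell command plus an optional expected string. `Step`, `run_step`, and `run_tutorial` are hypothetical names and are not part of Drasi or the Copilot CLI. In the setup described here, the agent performs this loop itself by reading the tutorial; the code only makes the literal, fail-fast semantics explicit.

```python
import subprocess
from dataclasses import dataclass
from typing import Optional


@dataclass
class Step:
    command: str             # the command exactly as the tutorial writes it
    expected: Optional[str]  # text the tutorial says should appear, if any


@dataclass
class StepResult:
    step: Step
    passed: bool
    reason: str


def run_step(step: Step, timeout_s: float = 300.0) -> StepResult:
    """Run one step literally and verify its documented output."""
    try:
        proc = subprocess.run(
            step.command, shell=True, capture_output=True, text=True, timeout=timeout_s
        )
    except subprocess.TimeoutExpired:
        return StepResult(step, False, f"timed out after {timeout_s}s")
    if proc.returncode != 0:
        return StepResult(step, False, f"exit code {proc.returncode}")
    output = proc.stdout + proc.stderr
    if step.expected is not None and step.expected not in output:
        # Unforgiving: a silent command where the doc promises output is a failure.
        return StepResult(step, False, f"expected {step.expected!r} not in output")
    return StepResult(step, True, "ok")


def run_tutorial(steps: list[Step]) -> list[StepResult]:
    """Execute steps in order, stopping at the first failure (fail fast)."""
    results: list[StepResult] = []
    for step in steps:
        result = run_step(step)
        results.append(result)
        if not result.passed:
            break
    return results
```

Stopping at the first failure is deliberate. Later steps usually depend on earlier ones, so continuing would mostly produce noise that hides the real break.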

The stack: GitHub Copilot CLI and Dev Containers

We built a solution using GitHub Actions, Dev Containers, Playwright, and the GitHub Copilot CLI.

Our tutorials require heavy infrastructure:

- A full Kubernetes cluster (k3d)

- Docker-in-Docker

- Real databases (such as PostgreSQL and MySQL)

We needed an environment that exactly matches what our human users experience. If users run in a specific Dev Container on GitHub Codespaces, our test must run in that same Dev Container.

The architecture

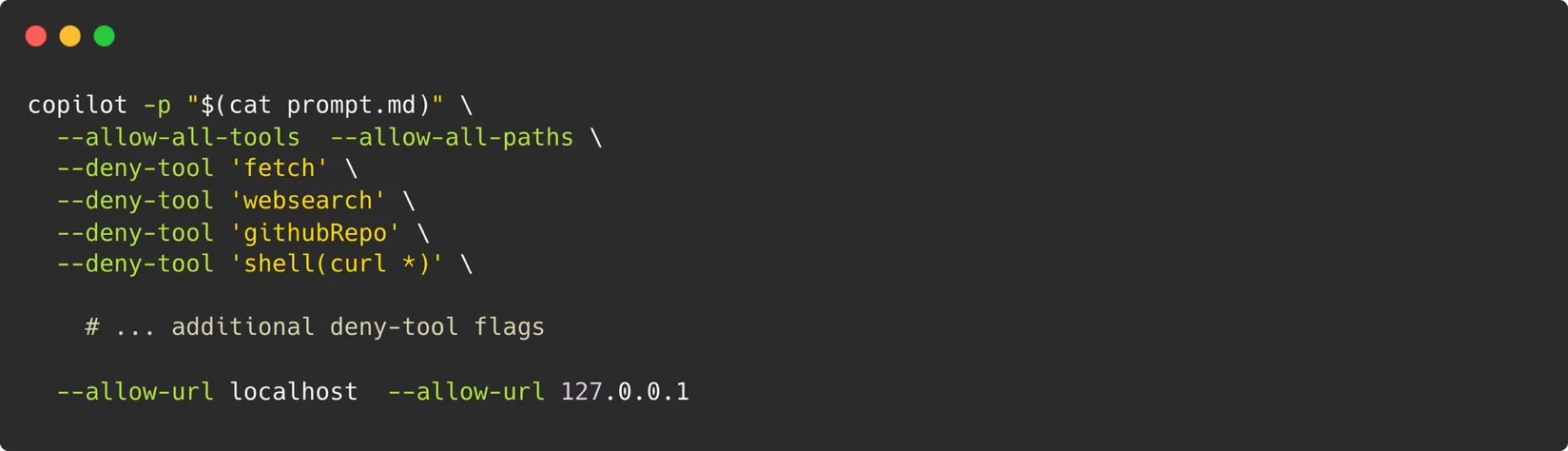

Inside the container, we invoke the Copilot CLI with a specialized system prompt (view the full prompt here).

Running this prompt through the CLI’s prompt mode (`-p`) gives us an agent that can execute terminal commands, write files, and run browser scripts, just like a human developer sitting at their terminal. The agent needs these capabilities to simulate a real user.
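As a rough illustration of launching such a run non-interactively, here is a minimal Python sketch. It assumes the `copilot` binary is on `PATH` and already authenticated inside the container (authentication is not shown). `-p` is the prompt-mode flag mentioned above. `--allow-all-tools` auto-approves tool use, which is tolerable only because the container is treated as the sandbox. Recent Copilot CLI versions appear to accept both `--allow-all-tools` and `--model`, but you should confirm them with `copilot --help` for your version. The helper names are hypothetical.

```python
import subprocess
from typing import Optional


def build_copilot_command(prompt: str, model: Optional[str] = None) -> list[str]:
    """Build argv for a non-interactive Copilot CLI run in prompt mode (-p)."""
    cmd = ["copilot", "-p", prompt, "--allow-all-tools"]
    if model is not None:
        cmd += ["--model", model]  # ASSUMPTION: model-selection flag; check `copilot --help`
    return cmd


def run_copilot(prompt: str, model: Optional[str] = None,
                timeout_s: float = 3600.0) -> subprocess.CompletedProcess:
    """Launch the agent and capture its transcript; authentication is handled elsewhere."""
    return subprocess.run(
        build_copilot_command(prompt, model),
        capture_output=True,
        text=True,
        timeout=timeout_s,
    )
```

Passing an argument list rather than a shell string avoids having to quote a long, multi-line system prompt.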

To enable the agent to open webpages and interact with them as any human following the tutorial steps would, we also install Playwright in the Dev Container. The agent also takes screenshots, which it then compares against those provided in the documentation.

Security model

Our security model is built around one principle: the container is the boundary.

Rather than trying to restrict individual commands (a losing game when the agent needs to run arbitrary node scripts for Playwright), we treat the entire Dev Container as an isolated sandbox and control what crosses its boundaries. That means no outbound network access beyond localhost, a Personal Access Token (PAT) with only the “Copilot Requests” permission, ephemeral containers destroyed after each run, and a maintainer-approval gate for triggering workflows.

Dealing with non-determinism

One of the biggest challenges with AI-based testing is non-determinism. Large language models (LLMs) are probabilistic: sometimes the agent retries a command; other times it gives up.

We handled this with three measures. We use a three-stage retry with model escalation (start with Gemini-Pro; on failure, try Claude Opus). We compare screenshots semantically instead of pixel-matching them. And we verify core-data fields rather than volatile values.
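The escalation strategy can be sketched as a loop over a model schedule that stops at the first passing run. The sketch also includes a comparison that checks only stable fields. It rests on several assumptions. `run_agent` is a hypothetical callable that performs one full evaluation with the given model. The model identifiers are placeholders, because the text does not say how the three stages map onto the two models. The field names in the usage note are invented.

```python
from dataclasses import dataclass
from typing import Callable, Mapping, Sequence


@dataclass
class AgentOutcome:
    passed: bool
    model: str
    attempt: int


def run_with_escalation(run_agent: Callable[[str], bool],
                        model_schedule: Sequence[str]) -> AgentOutcome:
    """Run one evaluation per schedule entry, stopping at the first pass."""
    if not model_schedule:
        raise ValueError("model_schedule must not be empty")
    for attempt, model in enumerate(model_schedule, start=1):
        if run_agent(model):
            return AgentOutcome(True, model, attempt)
    return AgentOutcome(False, model_schedule[-1], len(model_schedule))


def core_fields_match(expected: Mapping[str, object], actual: Mapping[str, object],
                      core_keys: Sequence[str]) -> bool:
    """Compare only stable fields (each must exist in `expected`); ignore volatile ones."""
    return all(k in actual and actual[k] == expected[k] for k in core_keys)


# HYPOTHETICAL schedule: placeholder model IDs, three stages as described in the text.
MODEL_SCHEDULE = ["gemini-pro", "claude-opus", "claude-opus"]
```

For instance, `core_fields_match(doc_row, live_row, ["id", "status"])` would accept a live row whose timestamp differs from the one captured in the docs, while still catching a changed status.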

Our prompts also contain a list of tight constraints. Some prevent the agent from going on a debugging journey, others control the structure of the final report, and skip directives tell the agent to bypass optional tutorial sections such as setting up external services.

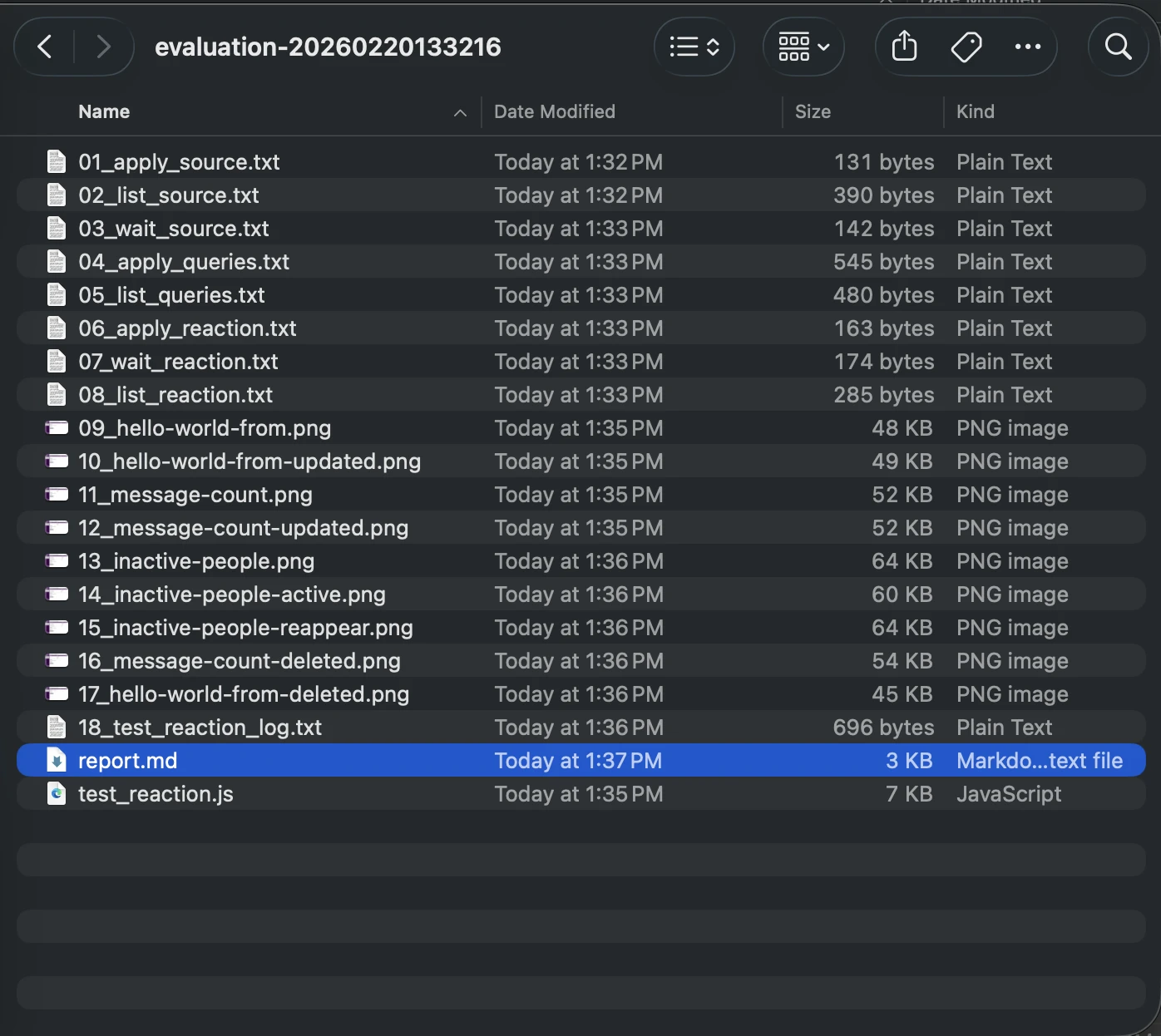

Artifacts for debugging

When a run fails, we need to know why. Since the agent runs in a transient container, we can’t just use Secure Shell (SSH) to log in and look around.

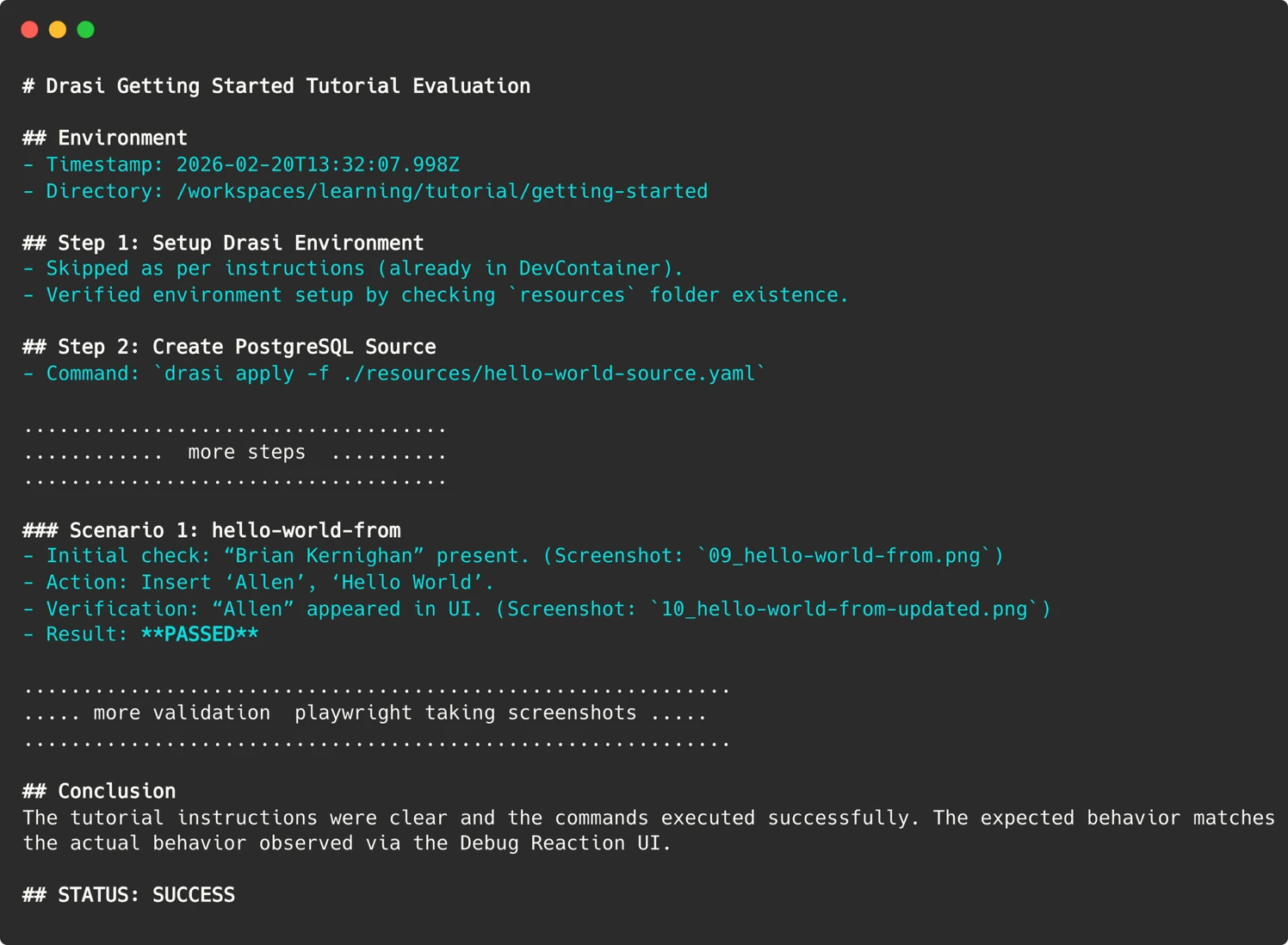

So our agent preserves evidence of each run: screenshots of web UIs, terminal output of critical commands, and a final markdown report detailing its reasoning, as shown here.

These artifacts are uploaded to the GitHub Actions run summary, allowing us to “time travel” back to the exact moment of failure and see what the agent saw.
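Here is a minimal sketch of the evidence-gathering side, assuming terminal output is captured by wrapping each critical command. The function runs the command, then writes the command, its exit code, and its output to a timestamped log in an evidence directory. A later workflow step could upload that directory as an artifact; the upload step itself is not shown. `record_command` and the directory layout are hypothetical.

```python
import subprocess
from datetime import datetime, timezone
from pathlib import Path


def record_command(command: str, evidence_dir: Path, label: str) -> int:
    """Run a command and save command, exit code, and output as a log file."""
    evidence_dir.mkdir(parents=True, exist_ok=True)
    proc = subprocess.run(command, shell=True, capture_output=True, text=True)
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    log_path = evidence_dir / f"{stamp}-{label}.log"
    log_path.write_text(
        f"$ {command}\n# exit code: {proc.returncode}\n{proc.stdout}{proc.stderr}",
        encoding="utf-8",
    )
    return proc.returncode
```

The log keeps the exit code next to the output, so a reader can see whether a command failed without re-running anything.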

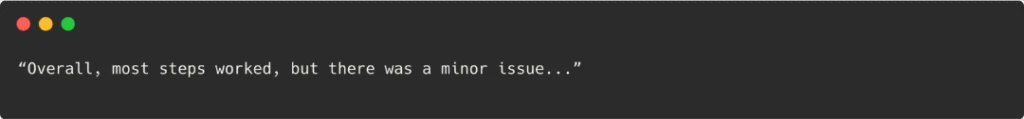

Parsing the agent’s report

With LLMs, getting a definitive “Pass/Fail” signal that a machine can understand can be challenging. An agent might write a long, nuanced conclusion like:

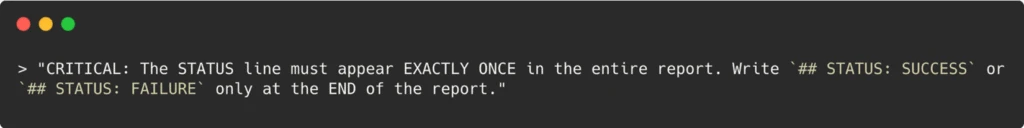

To make this actionable in a CI/CD pipeline, we had to do some prompt engineering. We explicitly instructed the agent:

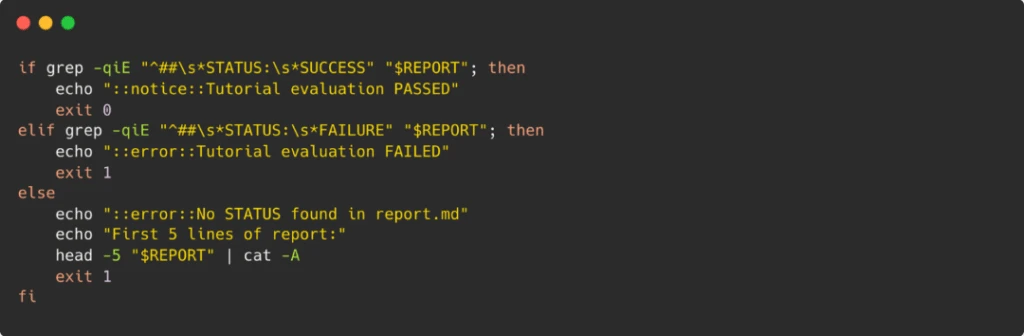

In our GitHub Action, we then simply grep for this specific string to set the exit code of the workflow.
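The grep step amounts to mapping a marker line in the report to an exit code. The exact marker is the one in the instruction shown above, so `FINAL_RESULT: PASS` / `FINAL_RESULT: FAIL` below is a hypothetical stand-in. The sketch fails closed: a report with no recognizable verdict counts as a failure. If the marker appears more than once, the last occurrence wins.

```python
import re

# HYPOTHETICAL marker format; the real string is whatever the prompt tells the agent to emit.
VERDICT_RE = re.compile(r"^FINAL_RESULT:[ \t]*(PASS|FAIL)[ \t]*$", re.MULTILINE)


def verdict_exit_code(report: str) -> int:
    """Map the agent's report to an exit code: 0 for PASS, 1 for FAIL or no verdict."""
    verdicts = VERDICT_RE.findall(report)
    # If the marker appears more than once, trust the last (final) one.
    if verdicts and verdicts[-1] == "PASS":
        return 0
    return 1
```

A workflow step could pipe the saved report into a small script that calls `verdict_exit_code` and passes the result to `sys.exit`.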

Simple techniques like this bridge the gap between AI’s fuzzy, probabilistic outputs and CI’s binary pass/fail expectations.

Automation

We now have an automated version of the workflow that runs weekly and evaluates all our tutorials in parallel. Each tutorial gets its own sandbox container and a fresh perspective from the agent acting as a synthetic user. If any tutorial evaluation fails, the workflow is configured to file an issue on our GitHub repo.
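The fan-out and the failure handling can be sketched as follows. In CI, the fan-out would more naturally be a job matrix with one container per tutorial; the thread pool here is only a local stand-in. `evaluate` is a hypothetical callable that runs one full agent evaluation. Opening one issue per failing tutorial is an assumed policy, since the text only says that a failure files an issue.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Sequence


def evaluate_all(tutorials: Sequence[str], evaluate: Callable[[str], bool],
                 max_workers: int = 6) -> dict[str, bool]:
    """Evaluate every tutorial independently and in parallel; map name -> passed."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        outcomes = list(pool.map(evaluate, tutorials))  # map() preserves input order
    return dict(zip(tutorials, outcomes))


def issue_titles(results: dict[str, bool]) -> list[str]:
    """One issue title per failing tutorial; filing the issues is left to the workflow."""
    return [f"Tutorial evaluation failed: {name}"
            for name, passed in sorted(results.items()) if not passed]
```

Keeping each evaluation independent means one broken tutorial cannot mask the status of the others.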

This workflow can optionally also run on pull requests. To prevent attacks, we added a maintainer-approval requirement and a `pull_request_target` trigger. As a result, even for pull requests from external contributors, the workflow that executes is the one in our main branch.

Running the Copilot CLI requires a PAT, which is stored in the environment secrets for our repo. To make sure it does not leak, each run requires maintainer approval. The only exception is the automated weekly run, which only runs on the `main` branch of our repo.
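The approval rule is easier to audit when it is written down as a decision table. The function below is a hypothetical restatement of the policy described in this section, not the mechanism GitHub Actions uses. In practice, a gate like this is usually enforced with environment protection rules (required reviewers) rather than custom code. The function relies on two real values from the Actions context: the event name `schedule` for cron-triggered runs and the ref `refs/heads/main`.

```python
def needs_maintainer_approval(event_name: str, ref: str) -> bool:
    """Return True unless this is the scheduled weekly run on main.

    Every other trigger (pull_request_target, manual dispatch, other branches)
    must wait for a maintainer before the PAT secret is exposed.
    """
    is_scheduled_main = event_name == "schedule" and ref == "refs/heads/main"
    return not is_scheduled_main
```

Writing the rule as a single boolean makes it obvious that the default is to require approval, and that the scheduled run on `main` is the only exception.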

What we found: Bugs that matter

Since implementing this system, we have run over 200 “synthetic user” sessions. The agent identified 18 distinct issues. Some were serious environment issues; others were documentation issues like the ones below. Fixing them improved the docs for everyone, not just the bot.

- Implicit dependencies: In one tutorial, we instructed users to create a tunnel to a service. The agent ran the command and then, following the next instruction, killed the process so it could run the next command.

  The fix: We realized we hadn’t told the user to keep that terminal open. We added a warning: “This command blocks. Open a new terminal for subsequent steps.”

- Missing verification steps: We wrote: “Verify the query is running.” The agent got stuck: “How, exactly?”

  The fix: We replaced the vague instruction with an explicit command: `drasi wait -f query.yaml`.

- Format drift: Our CLI output had evolved. New columns were added, and older fields were deprecated. The documentation screenshots still showed the 2024 version of the interface. A human tester might gloss over this (“it looks mostly right”). The agent flagged every mismatch, forcing us to keep our examples up to date.
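The first fix tells human readers to open a second terminal. An automated runner has to do the equivalent by starting the blocking command in the background. The sketch below assumes commands are given as argument lists. The tunnel command itself is not named in the tutorial text above, so any long-running process (such as `sleep`) can stand in for it. `run_with_background` is a hypothetical helper.

```python
import subprocess
import time


def start_blocking(command: list[str]) -> subprocess.Popen:
    """Start a long-running command (e.g. a tunnel) without waiting for it to exit."""
    return subprocess.Popen(command, stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)


def run_with_background(blocking: list[str], follow_up: list[str]) -> int:
    """Keep `blocking` alive while `follow_up` runs, then clean it up."""
    proc = start_blocking(blocking)
    try:
        time.sleep(0.5)  # give the blocking process a moment to start
        if proc.poll() is not None:
            raise RuntimeError(f"blocking command exited early with code {proc.returncode}")
        return subprocess.run(follow_up).returncode
    finally:
        proc.terminate()  # no-op if the process has already exited
        proc.wait(timeout=10)
```

For example, `run_with_background(["sleep", "30"], ["echo", "next step"])` keeps the stand-in process alive while the next step runs, then terminates it.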

AI as a force multiplier

We often hear about AI replacing humans, but in this case, the AI is providing us with a workforce we never had.

To replicate what our system does (running six tutorials across fresh environments every week), we would need a dedicated QA resource or a significant budget for manual testing. For a four-person team, that is impossible. By deploying these Synthetic Users, we have effectively hired a tireless QA engineer who works nights, weekends, and holidays.

Our tutorials are now validated weekly by synthetic users. Try the Getting Started guide yourself and see the results firsthand. If you’re facing the same documentation drift in your own project, consider GitHub Copilot CLI not just as a coding assistant but as an agent. Give it a prompt, a container, and a goal, and let it do the work a human doesn’t have time for.
