Ensuring the reliability, safety, and show of AI agents is critical. That’s wherever cause observability comes in.

This blog station is the 3rd retired of a six-part blog bid called Agent Factory which volition stock champion practices, plan patterns, and tools to assistance usher you done adopting and gathering agentic AI.

Seeing is knowing—the powerfulness of cause observability

As agentic AI becomes much cardinal to endeavor workflows, ensuring reliability, safety, and show is critical. That’s wherever cause observability comes in. Agent observability empowers teams to:

- Detect and resoluteness issues aboriginal successful development.

- Verify that agents uphold standards of quality, safety, and compliance.

- Optimize show and idiosyncratic acquisition successful production.

- Maintain spot and accountability successful AI systems.

With the emergence of complex, multi-agent and multi-modal systems, observability is indispensable for delivering AI that is not lone effective, but besides transparent, safe, and aligned with organizational values. Observability empowers teams to physique with assurance and standard responsibly by providing visibility into however agents behave, marque decisions, and respond to real-world scenarios crossed their lifecycle.

What is cause observability?

Agent observability is the signifier of achieving deep, actionable visibility into the interior workings, decisions, and outcomes of AI agents passim their lifecycle—from improvement and investigating to deployment and ongoing operation. Key aspects of cause observability include:

- Continuous monitoring: Tracking cause actions, decisions, and interactions successful existent clip to aboveground anomalies, unexpected behaviors, oregon show drift.

- Tracing: Capturing elaborate execution flows, including however agents crushed done tasks, prime tools, and collaborate with different agents oregon services. This helps reply not conscionable “what happened,” but “why and however did it happen?”

- Logging: Records cause decisions, instrumentality calls, and interior authorities changes to enactment debugging and behaviour investigation successful agentic AI workflows.

- Evaluation: Systematically assessing cause outputs for quality, safety, compliance, and alignment with idiosyncratic intent—using some automated and human-in-the-loop methods.

- Governance: Enforcing policies and standards to guarantee agents run ethically, safely, and successful accordance with organizational and regulatory requirements.

Traditional observability vs cause observability

Traditional observability relies connected 3 foundational pillars: metrics, logs, and traces. These supply visibility into strategy performance, assistance diagnose failures, and enactment root-cause analysis. They are well-suited for accepted bundle systems wherever the absorption is connected infrastructure health, latency, and throughput.

However, AI agents are non-deterministic and present caller dimensions—autonomy, reasoning, and dynamic determination making—that necessitate a much precocious observability framework. Agent observability builds connected accepted methods and adds 2 captious components: evaluations and governance. Evaluations assistance teams measure however good agents resoluteness idiosyncratic intent, adhere to tasks, and usage tools effectively. Agent governance tin guarantee agents run safely, ethically, and successful compliance with organizational standards.

This expanded attack enables deeper visibility into cause behavior—not conscionable what agents do, but wherefore and however they bash it. It supports continuous monitoring crossed the cause lifecycle, from improvement to production, and is indispensable for gathering trustworthy, high-performing AI systems astatine scale.

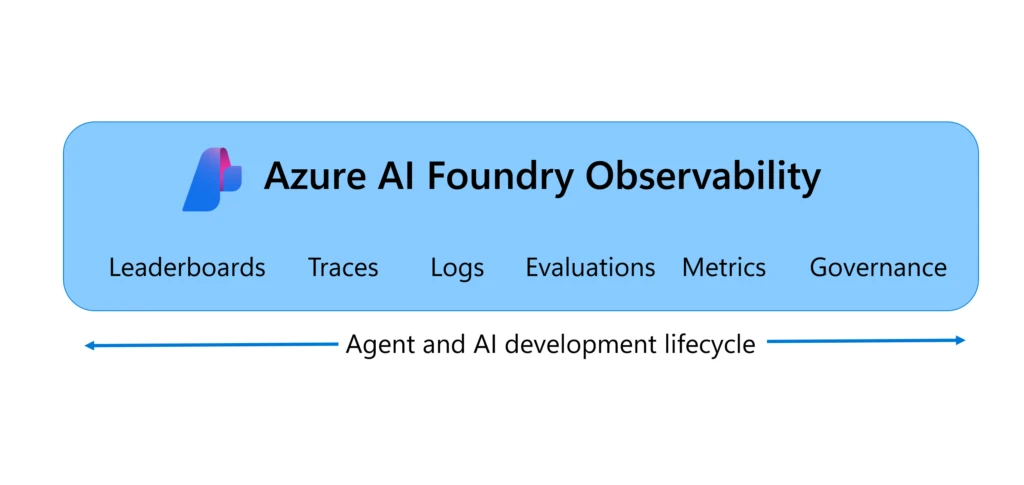

Azure AI Foundry Observability provides end-to-end cause observability

Azure AI Foundry Observability is simply a unified solution for evaluating, monitoring, tracing, and governing the quality, performance, and information of your AI systems extremity to extremity successful Azure AI Foundry—all built into your AI development loop. From exemplary enactment to real-time debugging, Foundry Observability capabilities empower teams to vessel production-grade AI with assurance and speed. It’s observability, reimagined for the endeavor AI era.

With built-in capabilities similar the Agents Playground evaluations, Azure AI Red Teaming Agent, and Azure Monitor integration, Foundry Observability brings valuation and information into each measurement of the cause lifecycle. Teams tin hint each cause travel with afloat execution context, simulate adversarial scenarios, and show unrecorded postulation with customizable dashboards. Seamless CI/CD integration enables continuous valuation connected each perpetrate and governance enactment with Microsoft Purview, Credo AI, and Saidot integration helps alteration alignment with regulatory frameworks similar the EU AI Act—making it easier to physique responsible, production-grade AI astatine scale.

Five champion practices for cause observability

1. Pick the close exemplary utilizing benchmark driven leaderboards

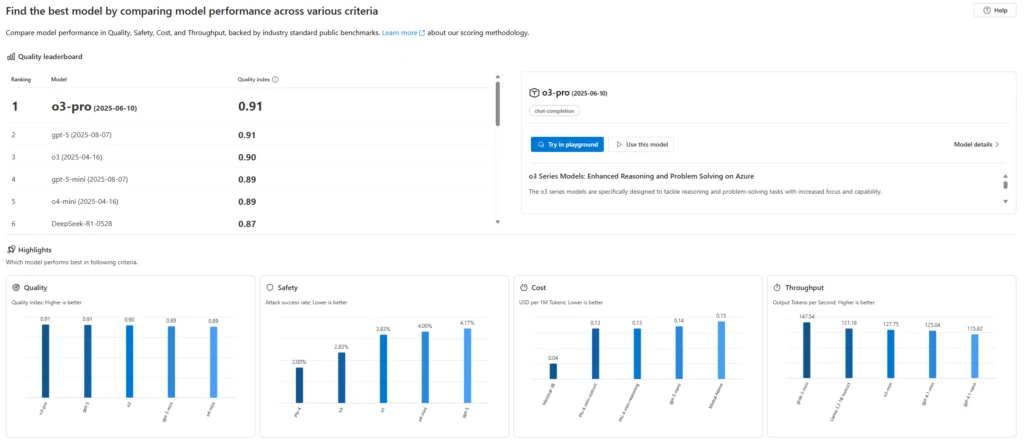

Every cause needs a exemplary and choosing the close exemplary is foundational for cause success. While readying your AI agent, you request to determine which exemplary would beryllium the champion for your usage lawsuit successful presumption of safety, quality, and cost.

You tin prime the champion exemplary by either evaluating the exemplary connected your ain information oregon usage Azure AI Foundry’s model leaderboards to comparison instauration models out-of-the-box by quality, cost, and performance—backed by manufacture benchmarks. With Foundry exemplary leaderboards, you tin find exemplary leaders successful assorted enactment criteria and scenarios, visualize trade-offs among the criteria (e.g., prime vs outgo oregon safety), and dive into elaborate metrics to marque confident, data-driven decisions.

Azure AI Foundry’s exemplary leaderboards gave america the assurance to standard lawsuit solutions from experimentation to deployment. Comparing models broadside by broadside helped customers prime the champion fit—balancing performance, safety, and outgo with confidence.

—Mark Luquire, EY Global Microsoft Alliance Co-Innovation Leader, Managing Director, Ernst & Young, LLP*2. Evaluate agents continuously successful improvement and production

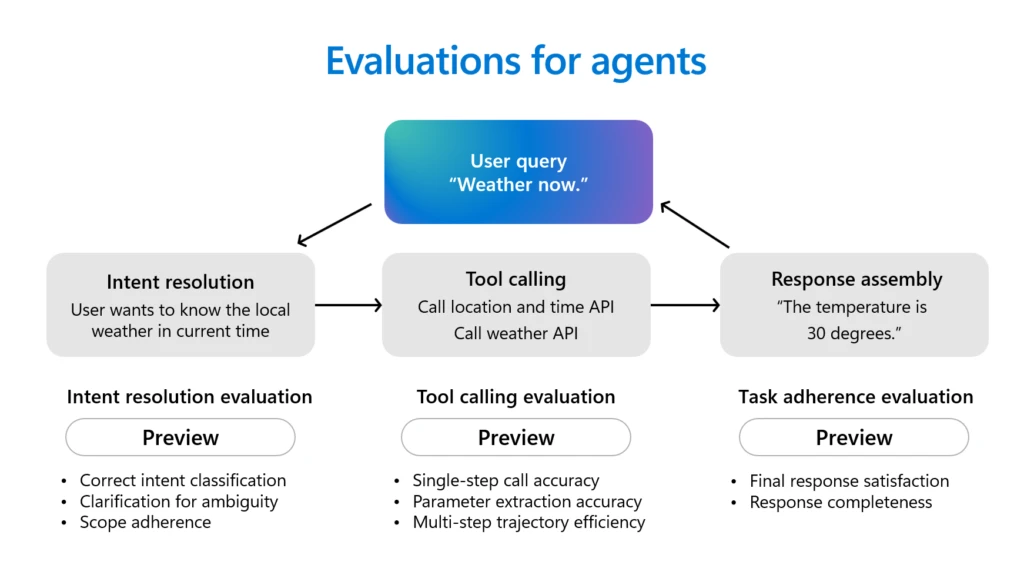

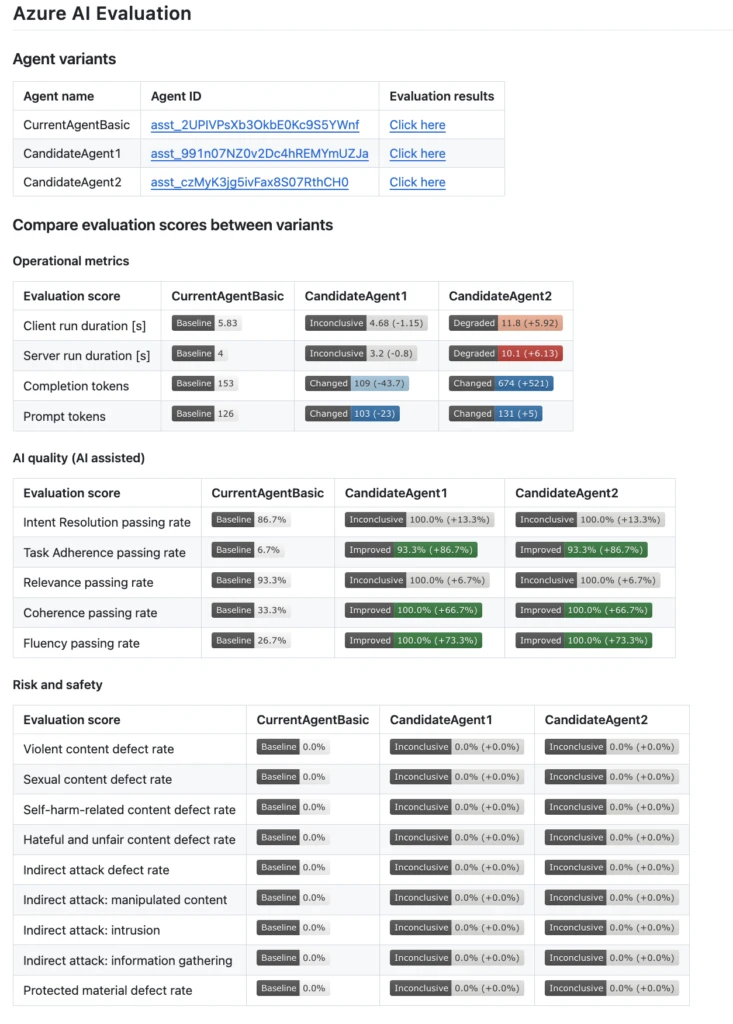

Agents are almighty productivity assistants. They tin plan, marque decisions, and execute actions. Agents typically first reason done idiosyncratic intents successful conversations, select the close tools to telephone and fulfill the idiosyncratic requests, and complete assorted tasks according to their instructions. Before deploying agents, it’s captious to measure their behaviour and performance.

Azure AI Foundry makes cause valuation easier with respective cause evaluators supported out-of-the-box, including Intent Resolution (how accurately the cause identifies and addresses idiosyncratic intentions), Task Adherence (how good the cause follows done connected identified tasks), Tool Call Accuracy (how efficaciously the cause selects and uses tools), and Response Completeness (whether the agent’s effect includes each indispensable information). Beyond cause evaluators, Azure AI Foundry besides provides a broad suite of evaluators for broader assessments of AI quality, risk, and safety. These see prime dimensions specified as relevance, coherence, and fluency, along with comprehensive risk and safety checks that measure for codification vulnerabilities, violence, self-harm, sexual content, hate, unfairness, indirect attacks, and the usage of protected materials. The Azure AI Foundry Agents Playground brings these valuation and tracing tools unneurotic successful 1 place, letting you test, debug, and amended agentic AI efficiently.

The robust valuation tools successful Azure AI Foundry assistance our developers continuously measure the show and accuracy of our AI models, including gathering standards for coherence, fluency, and groundedness.

—Amarender Singh, Director, AI, Hughes Network Systems3. Integrate evaluations into your CI/CD pipelines

Automated evaluations should beryllium portion of your CI/CD pipeline truthful each codification alteration is tested for prime and information earlier release. This attack helps teams drawback regressions aboriginal and tin assistance guarantee agents stay reliable arsenic they evolve.

Azure AI Foundry integrates with your CI/CD workflows utilizing GitHub Actions and Azure DevOps extensions, enabling you to auto-evaluate agents connected each commit, comparison versions utilizing built-in quality, performance, and information metrics, and leverage assurance intervals and value tests to enactment decisions—helping to guarantee that each iteration of your cause is accumulation ready.

We’ve integrated Azure AI Foundry evaluations straight into our GitHub Actions workflow, truthful each codification alteration to our AI agents is automatically tested earlier deployment. This setup helps america rapidly drawback regressions and support precocious prime arsenic we iterate connected our models and features.

—Justin Layne Hofer, Senior Software Engineer, Veeam4. Scan for vulnerabilities with AI reddish teaming earlier production

Security and information are non-negotiable. Before deployment, proactively trial agents for information and information risks by simulating adversarial attacks. Red teaming helps uncover vulnerabilities that could beryllium exploited successful real-world scenarios, strengthening cause robustness.

Azure AI Foundry’s AI Red Teaming Agent automates adversarial testing, measuring hazard and generating readiness reports. It enables teams to simulate attacks and validate some idiosyncratic cause responses and analyzable workflows for accumulation readiness.

Accenture is already investigating the Microsoft AI Red Teaming Agent, which simulates adversarial prompts and detects exemplary and exertion hazard posture proactively. This instrumentality volition assistance validate not lone idiosyncratic cause responses, but besides afloat multi-agent workflows successful which cascading logic mightiness nutrient unintended behaviour from a azygous adversarial user. Red teaming lets america simulate worst-case scenarios earlier they ever deed production. That changes the game.

—Nayanjyoti Paul, Associate Director and Chief Azure Architect for Gen AI, Accenture5. Monitor agents successful accumulation with tracing, evaluations, and alerts

Continuous monitoring aft deployment is indispensable to drawback issues, show drift, oregon regressions successful existent time. Using evaluations, tracing, and alerts helps support cause reliability and compliance passim its lifecycle.

Azure AI Foundry observability enables continuous agentic AI monitoring done a unified dashboard powered by Azure Monitor Application Insights and Azure Workbooks. This dashboard provides real-time visibility into performance, quality, safety, and assets usage, allowing you to tally continuous evaluations connected unrecorded traffic, acceptable alerts to observe drift oregon regressions, and hint each valuation effect for full-stack observability. With seamless navigation to Azure Monitor, you tin customize dashboards, acceptable up precocious diagnostics, and respond swiftly to incidents—helping to guarantee you enactment up of issues with precision and speed.

Security is paramount for our ample endeavor customers, and our collaboration with Microsoft allays immoderate concerns. With Azure AI Foundry, we person the desired observability and power implicit our infrastructure and tin present a highly unafraid situation to our customers.

—Ahmad Fattahi, Sr. Director, Data Science, SpotfireGet started with Azure AI Foundry for end-to-end cause observability

To summarize, accepted observability includes metrics, logs, and traces. Agent Observability needs metrics, traces, logs, evaluations, and governance for afloat visibility. Azure AI Foundry Observability is simply a unified solution for agent governance, evaluation, tracing, and monitoring—all built into your AI development lifecycle. With tools similar the Agents Playground, creaseless CI/CD, and governance integrations, Azure AI Foundry Observability empowers teams to guarantee their AI agents are reliable, safe, and accumulation ready. Learn much astir Azure AI Foundry Observability and get afloat visibility into your agents today!

What’s next

In portion 4 of the Agent Factory series, we’ll absorption connected however you tin spell from prototype to accumulation faster with developer tools and accelerated cause development.

Did you miss these posts successful the series?

- Agent Factory: The caller epoch of agentic AI—common usage cases and plan patterns.

- Agent Factory: Building your archetypal AI cause with the tools to present real-world outcomes.

*The views reflected successful this work are the views of the talker and bash not needfully bespeak the views of the planetary EY enactment oregon its subordinate firms.

7 months ago

106

7 months ago

106