Agents are only as capable as the tools you give them, and only as trustworthy as the governance behind those tools.

This blog post is the second in a six-part series called Agent Factory, which shares best practices, design patterns, and tools to help guide you through adopting and building agentic AI.

In the previous blog, we explored five common design patterns of agentic AI, from tool use and reflection to planning, multi-agent collaboration, and adaptive reasoning. These patterns show how agents can be structured to achieve reliable, scalable automation in real-world environments.

Across the industry, we’re seeing a clear shift. Early experiments focused on single-model prompts and static workflows. Now, the conversation is about extensibility: how to give agents a broad, evolving set of capabilities without locking into one vendor or rewriting integrations for each new need. Platforms are competing on how quickly developers can:

- Integrate with hundreds of APIs, services, data sources, and workflows.

- Reuse those integrations across different teams and runtime environments.

- Maintain enterprise-grade control over who can call what, when, and with what data.

The lesson from the past year of agentic AI development is simple: agents are only as capable as the tools you give them, and only as trustworthy as the governance behind those tools.

Extensibility through open standards

In the early stages of agent development, integrating tools was often a bespoke, platform-specific effort. Each framework had its own conventions for defining tools, passing data, and handling authentication. This created several consistent blockers:

- Duplication of effort: the same internal API had to be wrapped differently for each runtime.

- Brittle integrations: small changes to schemas or endpoints could break multiple agents at once.

- Limited reusability: tools built for one team or environment were hard to share across projects or clouds.

- Fragmented governance: different runtimes enforced different security and policy models.

As organizations began deploying agents across hybrid and multi-cloud environments, these inefficiencies became major obstacles. Teams needed a way to standardize how tools are described, discovered, and invoked, regardless of the hosting environment.

That’s where open protocols entered the conversation. Just as HTTP transformed the web by creating a common language for clients and servers, open protocols for agents aim to make tools portable, interoperable, and easier to govern.

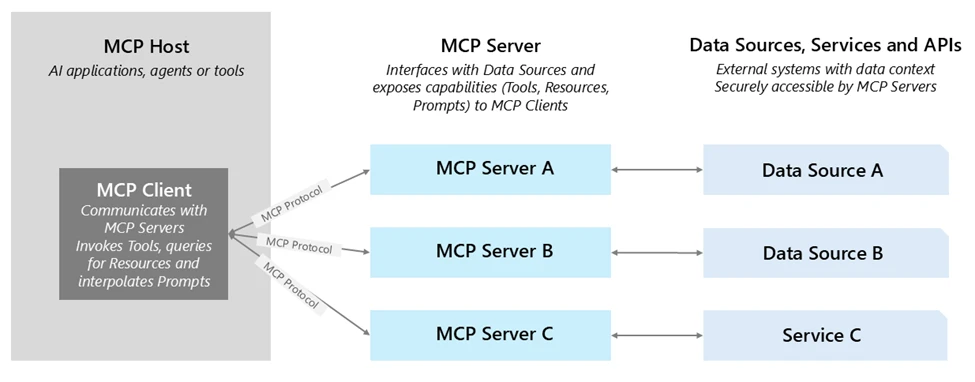

One of the most promising examples is the Model Context Protocol (MCP), a standard for defining tool capabilities and I/O schemas so any MCP-compliant agent can dynamically discover and invoke them. With MCP:

- Tools are self-describing, making discovery and integration faster.

- Agents can find and use tools at runtime without manual wiring.

- Tools can be hosted anywhere (on-premises, in a partner cloud, or in another business unit) without losing governance.
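
To make the idea of self-describing tools concrete, here is a minimal sketch in plain Python. It does not use an MCP SDK or the MCP wire format. `ToolSpec`, `ToolRegistry`, and the `get_order_status` tool are hypothetical names invented for this illustration, and the schema is a simplified stand-in for JSON Schema. The sketch only shows the pattern: each tool publishes a name, a description, and an input schema, and a caller can discover and invoke it at runtime without hand-written wiring.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass(frozen=True)
class ToolSpec:
    """Hypothetical self-describing tool: metadata plus a handler."""
    name: str
    description: str
    input_schema: Dict[str, type]  # simplified stand-in for a JSON Schema
    handler: Callable[..., Any]


class ToolRegistry:
    """Illustrative in-memory registry; a real server would expose this over a protocol."""

    def __init__(self) -> None:
        self._tools: Dict[str, ToolSpec] = {}

    def register(self, spec: ToolSpec) -> None:
        self._tools[spec.name] = spec

    def list_tools(self) -> List[Dict[str, Any]]:
        # Discovery: return metadata only, never the handler itself.
        return [
            {
                "name": t.name,
                "description": t.description,
                "input_schema": {k: v.__name__ for k, v in t.input_schema.items()},
            }
            for t in self._tools.values()
        ]

    def call_tool(self, name: str, arguments: Dict[str, Any]) -> Any:
        spec = self._tools.get(name)
        if spec is None:
            raise KeyError(f"unknown tool: {name}")
        # Check arguments against the declared schema before invoking.
        missing = set(spec.input_schema) - set(arguments)
        extra = set(arguments) - set(spec.input_schema)
        if missing or extra:
            raise ValueError(
                f"bad arguments: missing={sorted(missing)} extra={sorted(extra)}"
            )
        for key, expected in spec.input_schema.items():
            if not isinstance(arguments[key], expected):
                raise TypeError(f"{key} must be {expected.__name__}")
        return spec.handler(**arguments)


def get_order_status(order_id: str) -> Dict[str, str]:
    # Placeholder logic; a real tool would call an internal API here.
    return {"order_id": order_id, "status": "shipped"}


registry = ToolRegistry()
registry.register(ToolSpec(
    name="get_order_status",
    description="Look up the status of an order by ID.",
    input_schema={"order_id": str},
    handler=get_order_status,
))
```

An agent runtime would call `registry.list_tools()` to learn what is available, then `registry.call_tool("get_order_status", {"order_id": "A1"})` to invoke it. Because the schema travels with the tool, the caller needs no tool-specific code.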

Azure AI Foundry supports MCP, enabling you to bring existing MCP servers directly into your agents. This gives you the benefits of open interoperability plus enterprise-grade security, observability, and management. Learn more about MCP at MCP Dev Days.

Once you have a standard for portability through open protocols like MCP, the next question becomes: what kinds of tools should your agents have, and how do you organize them so they can deliver value quickly while staying adaptable?

In Azure AI Foundry, we think of this as building an enterprise toolchain: a layered set of capabilities that balance speed (getting something valuable running today), differentiation (capturing what makes your business unique), and reach (connecting across all the systems where work actually happens).

1. Built-in tools for rapid value: Azure AI Foundry includes ready-to-use tools for common enterprise needs: searching across SharePoint and data lakes, executing Python for data analysis, performing multi-step web research with Bing, and triggering browser automation tasks. These aren’t just conveniences; they let teams stand up functional, high-value agents in days instead of weeks, without the friction of early integration work.

2. Custom tools for your competitive edge: Every organization has proprietary systems and processes that can’t be replicated by off-the-shelf tools. Azure AI Foundry makes it straightforward to wrap these as agentic AI tools, whether they’re APIs from your ERP, a manufacturing quality control system, or a partner’s service. By invoking them through OpenAPI or MCP, these tools become portable and discoverable across teams, projects, and even clouds, while still benefiting from Foundry’s identity, policy, and observability layers.

3. Connectors for maximum reach: Through Azure Logic Apps, Foundry can connect agents to over 1,400 SaaS and on-premises systems: CRM, ERP, ITSM, data warehouses, and more. This dramatically reduces integration lift, allowing you to plug into existing enterprise processes without building every connector from scratch.

One example of this toolchain in action comes from NTT DATA, which built agents in Azure AI Foundry that integrate Microsoft Fabric Data Agent alongside other enterprise tools. These agents allow employees across HR, operations, and other functions to interact naturally with data, revealing real-time insights and enabling actions. This reduced time-to-market by 50% and gave non-technical users intuitive, self-service access to enterprise intelligence.

Enterprise-grade management for tools

Extensibility must be paired with governance to move from prototype to enterprise-ready automation. Azure AI Foundry addresses this with a secure-by-default approach to tool management:

- Authentication and identity in built-in connectors: Enterprise-grade connectors, like SharePoint and Microsoft Fabric, already use on-behalf-of (OBO) authentication. When an agent invokes these tools, Foundry ensures that the call respects the end user’s permissions via managed Entra IDs, preserving existing authorization rules. With Microsoft Entra Agent ID, every agentic project created in Azure AI Foundry automatically appears in an agent-specific application view within the Microsoft Entra admin center. This provides security teams with a unified directory view of all agents and agent applications they need to manage across Microsoft. This integration marks the first step toward standardizing governance for AI agents company-wide. While Entra ID is native, Azure AI Foundry also supports integrations with external identity systems. Through federation, customers who use providers such as Okta or Google Identity can still authenticate agents and users to call tools securely.

- Custom tools with OpenAPI and MCP: OpenAPI-specified tools enable seamless connectivity using managed identities, API keys, or unauthenticated access. These tools can be registered directly in Foundry and align with standard API design best practices. Foundry is also expanding MCP security to include stored credentials, project-level managed identities, and third-party OAuth flows, along with secure private networking, advancing toward a fully enterprise-grade, end-to-end MCP integration model.

- API governance with Azure API Management (APIM): APIM provides a powerful control plane for managing tool calls: it enables centralized publishing, policy enforcement (authentication, rate limits, payload validation), and monitoring. Additionally, you can deploy self-hosted gateways within VNets or on-prem environments to enforce enterprise policies close to backend systems. Complementing this, Azure API Center acts as a centralized, design-time API inventory and discovery hub, allowing teams to register, catalog, and manage private MCP servers alongside other APIs. These capabilities provide the same governance you expect for your APIs, extended to agentic AI tools without additional engineering.

- Observability and auditability: Every tool invocation in Foundry, whether internal or external, is traced with step-level logging. This includes identity, tool name, inputs, outputs, and outcomes, enabling continuous reliability monitoring and simplified auditing.
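
The policy enforcement and auditing described above can be sketched as a small gateway that sits in front of a tool. This is plain Python for illustration only, not Azure API Management policy syntax or a Foundry API. `ToolGateway`, `PolicyError`, and the default limits are assumptions made up for this example. It shows the three checks named above (authentication, rate limits, payload validation) plus an audit record for every attempt.

```python
import json
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Set


class PolicyError(Exception):
    """Raised when the gateway rejects a call before it reaches the tool."""


@dataclass
class ToolGateway:
    """Hypothetical policy layer: authorization, rate limiting, payload checks, audit."""
    allowed_callers: Dict[str, Set[str]]  # tool name -> identities allowed to call it
    max_calls_per_window: int = 3
    window_seconds: float = 60.0
    max_payload_bytes: int = 1024
    clock: Callable[[], float] = time.monotonic  # injectable so the sketch is testable
    audit_log: List[Dict[str, Any]] = field(default_factory=list)
    recent_calls: Dict[str, List[float]] = field(default_factory=dict)

    def invoke(self, caller: str, tool_name: str,
               tool: Callable[[Dict[str, Any]], Any],
               payload: Dict[str, Any]) -> Any:
        outcome = "error"  # overwritten on success or policy rejection
        try:
            self._authorize(caller, tool_name)
            self._rate_limit(caller)
            self._validate(payload)
            result = tool(payload)
            outcome = "ok"
            return result
        except PolicyError as exc:
            outcome = f"rejected: {exc}"
            raise
        finally:
            # Every attempt is recorded, including rejected and failed ones.
            self.audit_log.append(
                {"caller": caller, "tool": tool_name, "outcome": outcome}
            )

    def _authorize(self, caller: str, tool_name: str) -> None:
        if caller not in self.allowed_callers.get(tool_name, set()):
            raise PolicyError("caller not authorized for this tool")

    def _rate_limit(self, caller: str) -> None:
        # Sliding window: keep only timestamps still inside the window.
        now = self.clock()
        recent = [t for t in self.recent_calls.get(caller, [])
                  if now - t < self.window_seconds]
        if len(recent) >= self.max_calls_per_window:
            self.recent_calls[caller] = recent
            raise PolicyError("rate limit exceeded")
        recent.append(now)
        self.recent_calls[caller] = recent

    def _validate(self, payload: Dict[str, Any]) -> None:
        if not isinstance(payload, dict):
            raise PolicyError("payload must be an object")
        try:
            size = len(json.dumps(payload).encode("utf-8"))
        except (TypeError, ValueError) as exc:
            raise PolicyError("payload is not JSON-serializable") from exc
        if size > self.max_payload_bytes:
            raise PolicyError("payload too large")
```

The tool function itself contains no policy logic here. Limits and allow-lists live in the gateway, so they can change without touching tool code.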

Enterprise-grade management ensures tools are secure and observable, but success also depends on how you design and operate them from day one. Drawing on Azure AI Foundry guidance and customer experience, a few principles stand out:

- Start with the contract. Treat every tool like an API product. Define clear inputs, outputs, and error behaviors, and keep schemas consistent across teams. Avoid overloading a single tool with multiple unrelated actions; smaller, single-purpose tools are easier to test, monitor, and reuse.

- Choose the right packaging. For proprietary APIs, decide early whether OpenAPI or MCP best fits your needs. OpenAPI tools are straightforward for well-documented REST APIs, while MCP tools excel when portability and cross-environment reuse are priorities.

- Centralize governance. Publish custom tools behind Azure API Management or a self-hosted gateway so authentication, throttling, and payload inspection are enforced consistently. This keeps policy logic out of tool code and makes changes easier to roll out.

- Bind every action to identity. Always know which user or agent is invoking the tool. For built-in connectors, leverage identity passthrough or OBO. For custom tools, use Entra ID or the appropriate API key/credential model, and apply least-privilege access.

- Instrument early. Add tracing, logging, and evaluation hooks before moving to production. Early observability lets you track performance trends, detect regressions, and tune tools without downtime.
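
As a rough illustration of three of these principles (an explicit contract, identity on every call, and early instrumentation), here is a small Python sketch. Everything in it is hypothetical: the `convert_currency` tool, the `traced` decorator, the in-memory `TRACE` list standing in for a tracing backend, and the made-up exchange rate. It is one possible shape, not Foundry's implementation.

```python
import functools
import time
from dataclasses import asdict, dataclass
from typing import Any, Callable, Dict, List

TRACE: List[Dict[str, Any]] = []  # stand-in for a real tracing backend


@dataclass(frozen=True)
class ConvertRequest:
    """Input contract: one purpose, explicit fields."""
    amount: float
    from_currency: str
    to_currency: str


@dataclass(frozen=True)
class ConvertResponse:
    """Output contract."""
    amount: float
    currency: str


class ToolInputError(ValueError):
    """Documented error for invalid input; part of the tool's contract."""


def traced(tool_name: str) -> Callable:
    """Record caller identity, input, outcome, and duration for each call."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(caller_id: str, request: Any) -> Any:
            start = time.perf_counter()
            outcome = "error"
            try:
                result = fn(caller_id, request)
                outcome = "ok"
                return result
            finally:
                TRACE.append({
                    "tool": tool_name,
                    "caller": caller_id,
                    "input": asdict(request),
                    "outcome": outcome,
                    "duration_s": time.perf_counter() - start,
                })
        return wrapper
    return decorator


RATES = {("USD", "EUR"): 0.5}  # made-up rate, for the example only


@traced("convert_currency")
def convert_currency(caller_id: str, request: ConvertRequest) -> ConvertResponse:
    if request.amount < 0:
        raise ToolInputError("amount must be non-negative")
    key = (request.from_currency, request.to_currency)
    if key not in RATES:
        raise ToolInputError(f"unsupported pair: {key}")
    return ConvertResponse(amount=request.amount * RATES[key],
                           currency=request.to_currency)
```

Calling `convert_currency("user-1", ConvertRequest(10.0, "USD", "EUR"))` returns a typed response and appends one trace record. A failed call raises `ToolInputError` and is still traced, with the outcome `"error"`.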

Following these practices ensures that the tools you integrate today remain secure, portable, and maintainable as your agent ecosystem grows.

What’s next

In part three of the Agent Factory series, we’ll focus on observability for AI agents: how to trace every step, evaluate tool performance, and monitor agent behavior in real time. We’ll cover the built-in capabilities in Azure AI Foundry, integration patterns with Azure Monitor, and best practices for turning telemetry into continuous improvement.

Did you miss the first post in the series? Check it out: The new era of agentic AI: common use cases and design patterns.

